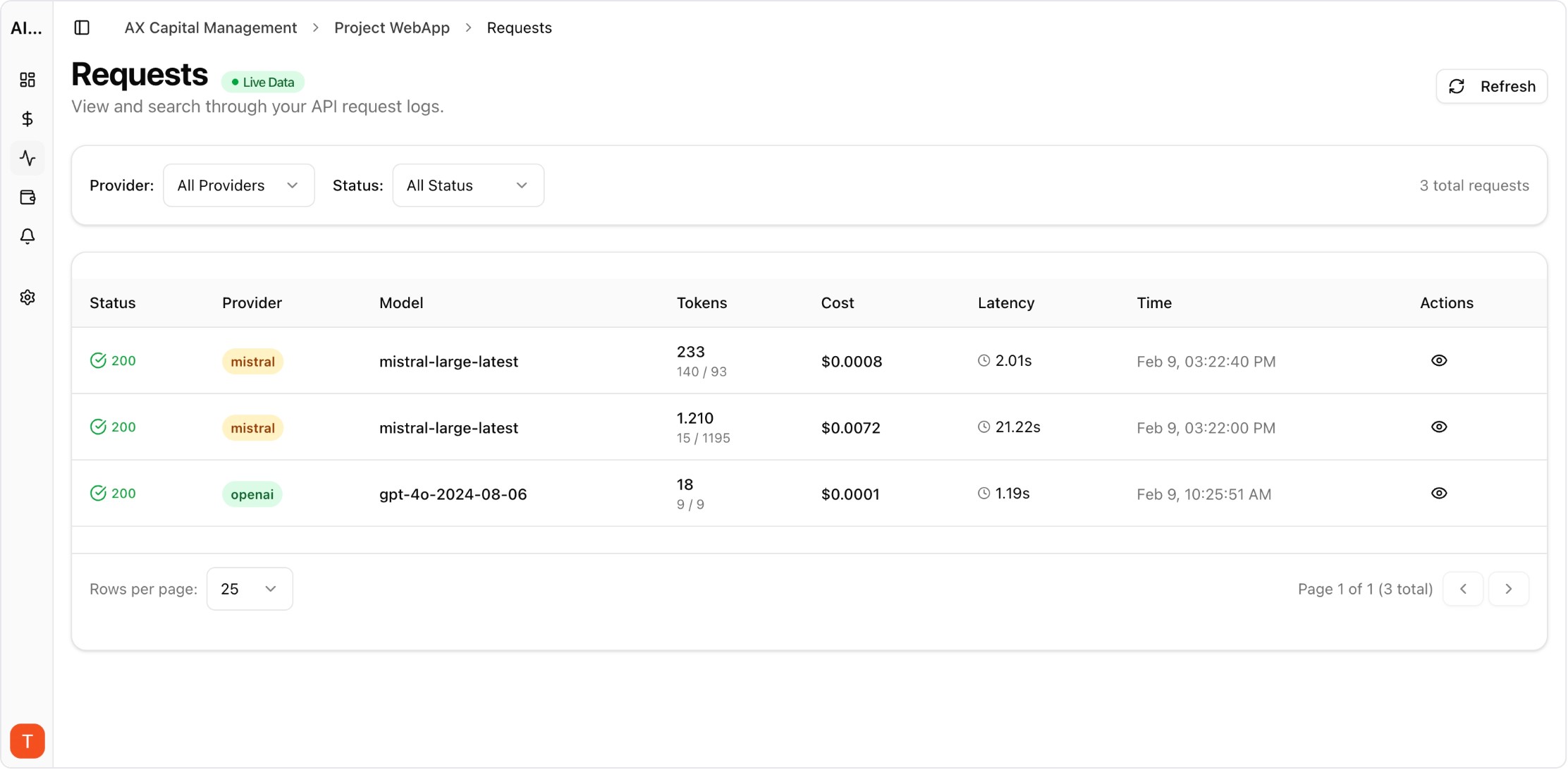

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

As AI features become mission-critical, reliability must be managed with explicit SLAs rather than intuition. Latency and error budgets give teams measurable boundaries for safe operation and controlled change.

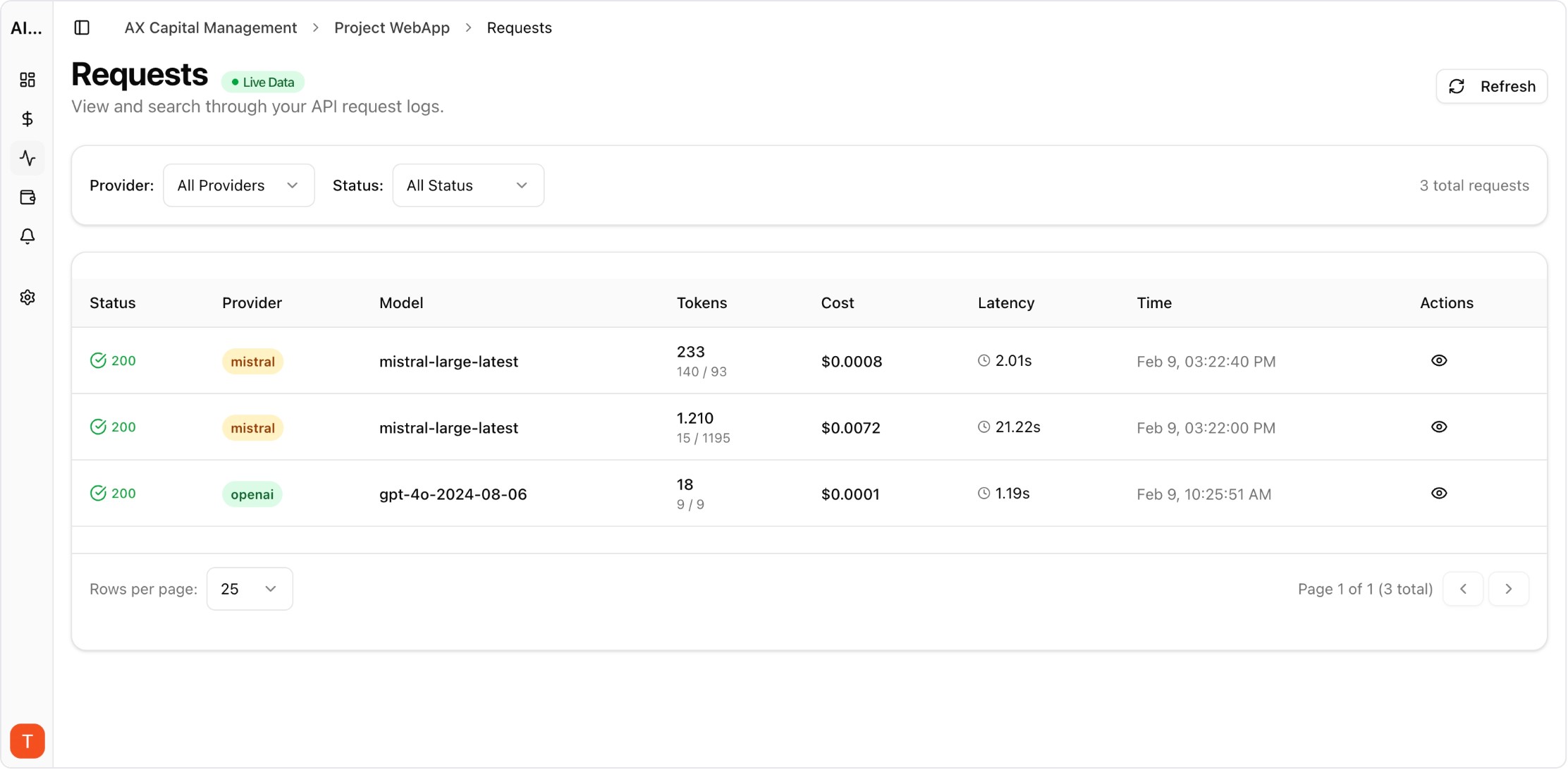

Real UI snapshot used to anchor the operational workflow described in this article.

Set SLOs for response time and success rate by key workflow, not just by provider API endpoint. User-facing SLOs align technical metrics with product expectations.

Budgets define how much degradation is acceptable within a period. Teams can spend budget deliberately during launches and then stabilize before breaching commitments.

Track how quickly budgets are consumed, especially during peak traffic and rollout windows. Burn-rate monitoring gives earlier warnings than end-of-period SLA summaries.

When latency budget burn accelerates, trigger predefined routing changes or model downgrades. Automated policy links prevent manual delays during reliability events.

Distinguish upstream provider issues from internal application regressions. Clear attribution speeds incident response and prevents incorrect mitigation actions.

Reliability tradeoffs affect roadmap pace. Regular cross-functional reviews keep launch ambition aligned with operational capacity and user commitments.

AI Observability Stack for SaaS Teams: What to Measure Beyond Tokens and Spend

observability · framework

Token Budgeting for RAG Systems: Control Context Size Without Losing Accuracy

cost-optimization · problem

Shadow Traffic Provider Evaluation: Compare LLM Providers Without User Risk

provider-strategy · problem

AI Cost Anomaly Detection Playbook for High-Volume LLM Products

observability · how-to

Latency and error budgets provide shared language for product and engineering decisions. Unified observability plus alerting helps teams maintain SLAs while still shipping quickly.