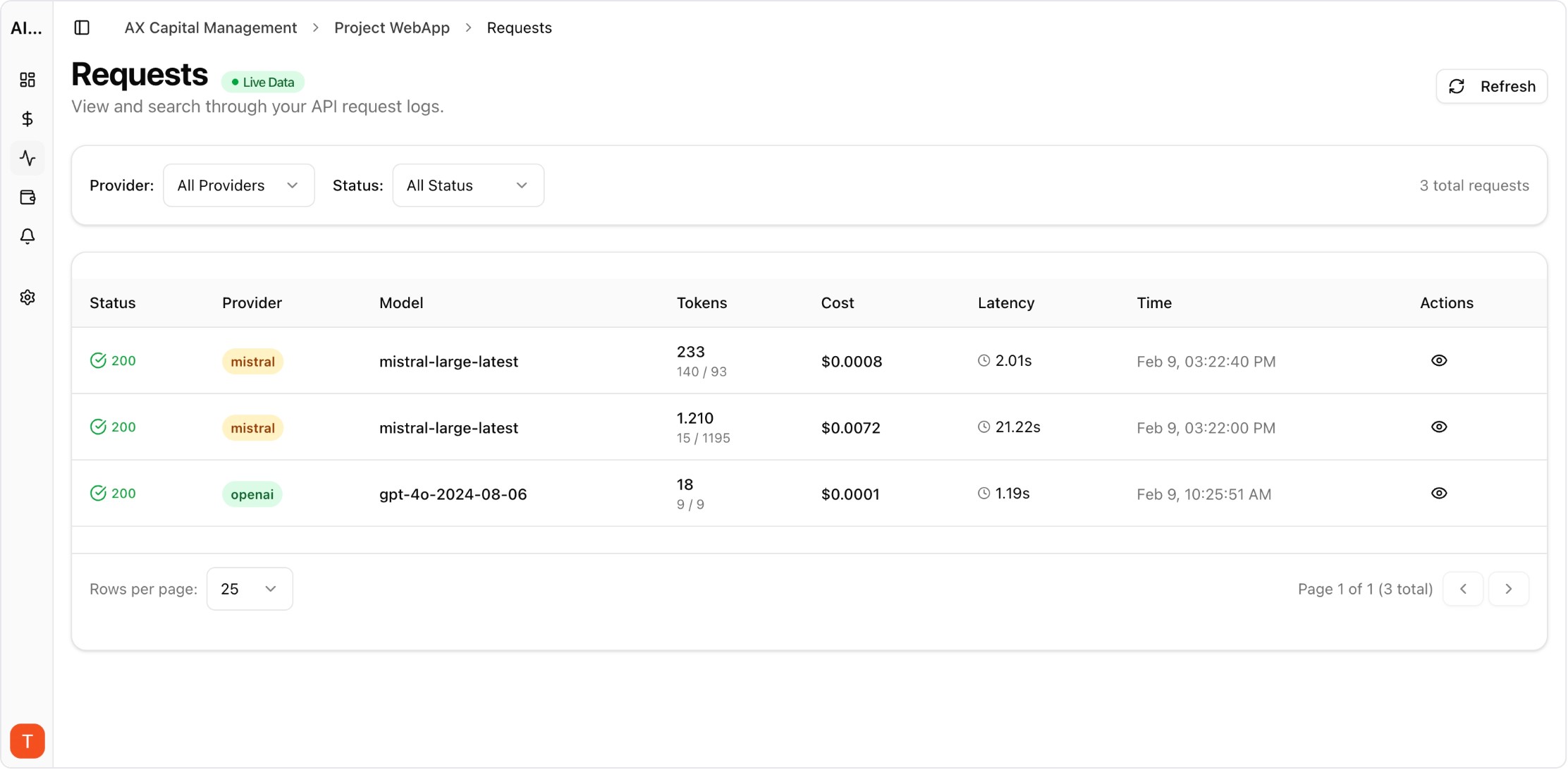

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

RAG systems often fail on economics before they fail on accuracy. Teams keep adding documents to context windows and spend rises faster than product value. Token budgeting creates explicit limits so retrieval stays useful, fast, and affordable.

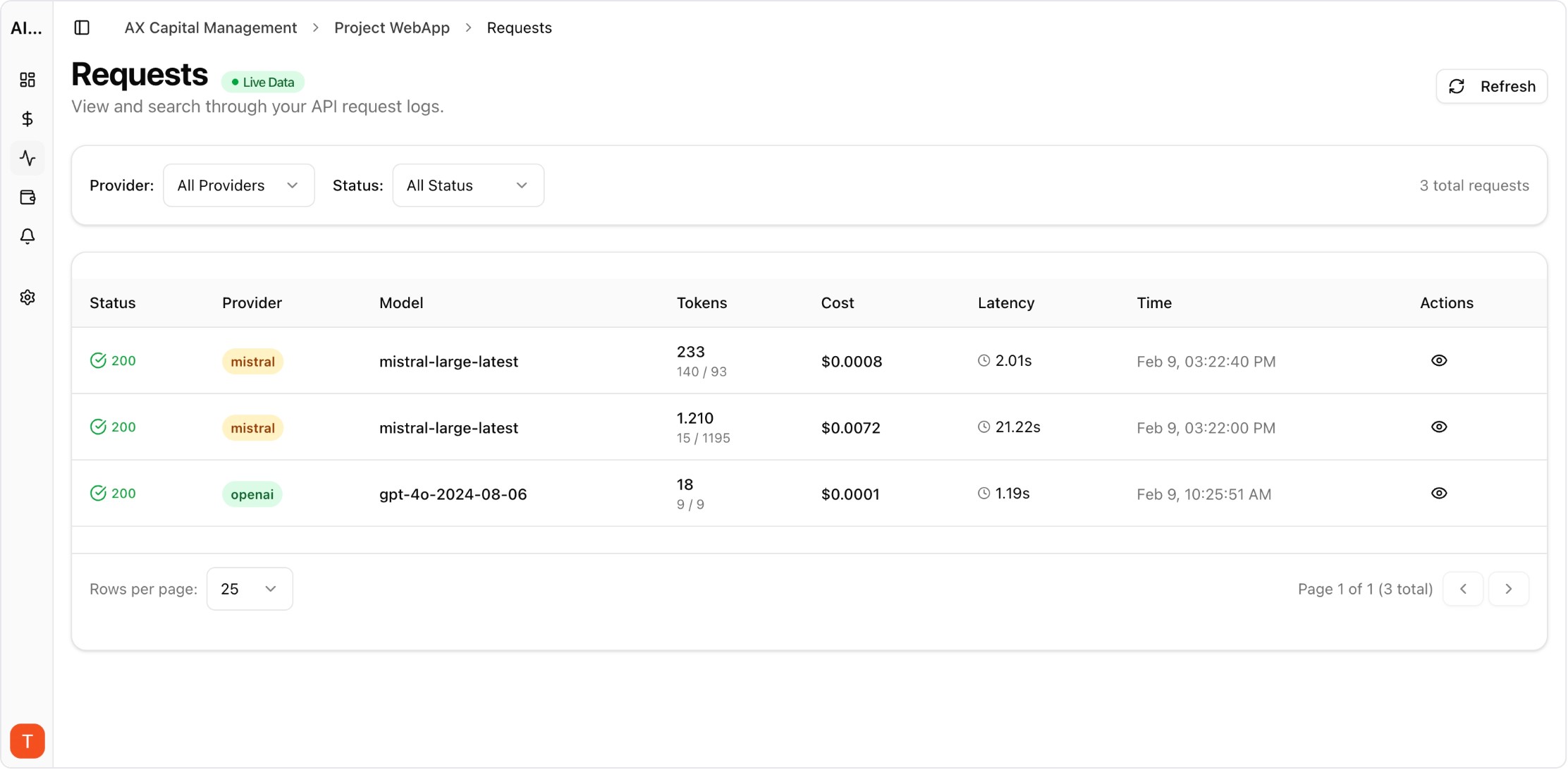

Real UI snapshot used to anchor the operational workflow described in this article.

Customer support, legal search, and analytics assistants have different context requirements. Define separate token ceilings for each workflow to avoid one oversized default policy.

Reserve token ranges for instructions, retrieved chunks, and answer generation. This prevents retrieval payloads from consuming all available context and forcing shallow final responses.

Apply score thresholds and deduplicate near-identical chunks before prompt assembly. High recall with low relevance leads to expensive context bloat that does not improve factual quality.

Pre-summarize long policy pages and keep canonical compressed artifacts. Sending compressed context for known document families can cut spend while preserving answer grounding.

Run benchmark questions at multiple token limits and compare answer utility. Teams should choose the smallest budget that meets quality targets, not the largest budget that feels safe.

Log prompt assembly stats and trigger alerts when requests exceed per-flow ceilings. Violations often indicate retrieval regressions, prompt drift, or accidental prompt duplication.

LLM Cost Optimization Guide: 11 Tactics to Reduce AI Spend Without Losing Quality

cost-optimization · framework

LLM Cost per Support Ticket: How to Track and Lower AI Service Margins

cost-optimization · commercial

AI Feature Unit Economics Framework for SaaS and Agency Teams

cost-optimization · framework

AI API Cost Allocation by Team: Build Ownership Across Engineering and Product

cost-optimization · commercial

Token budgets turn RAG from open-ended experimentation into controlled production engineering. Use routing and analytics to keep context efficient as your knowledge base grows.