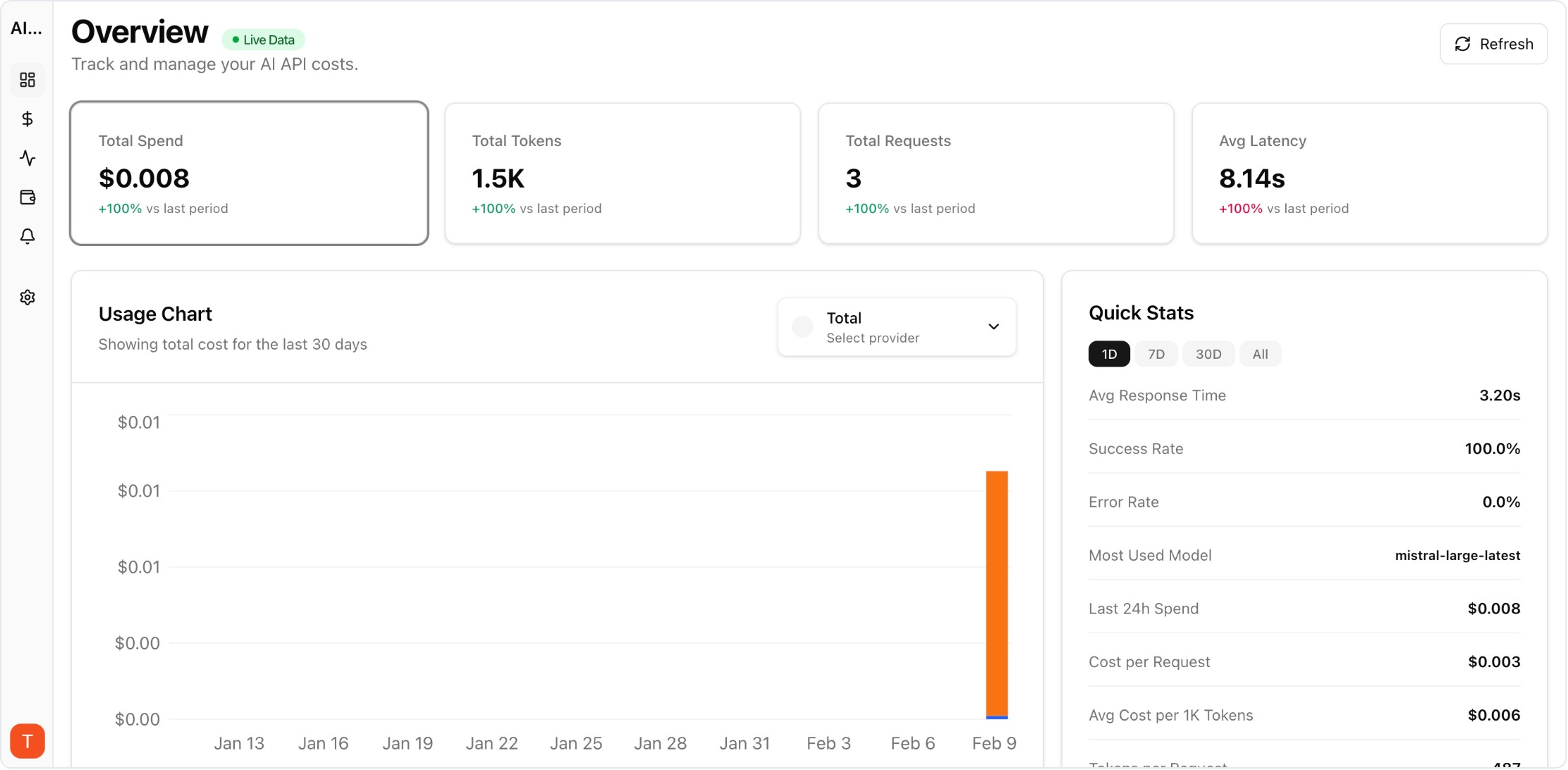

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

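Provider decisions based only on sandbox tests often fail in production, because sandbox prompts rarely match real traffic. Shadow traffic sends a copy of each production request to a candidate provider and records the response without returning it to the user, so teams can run realistic comparisons without exposing users to unstable behavior. This reduces migration risk and pricing uncertainty before any user-facing change is made.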

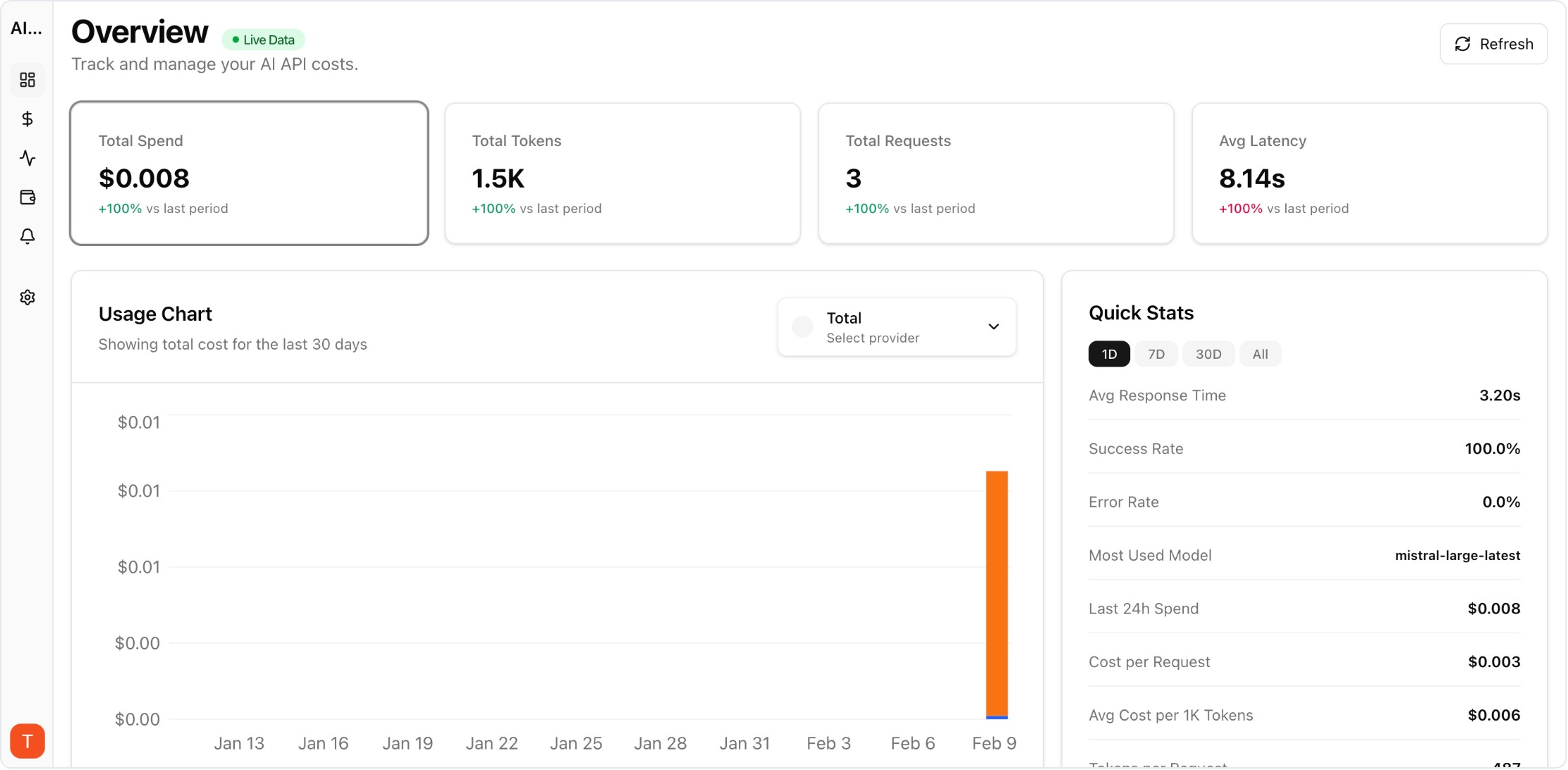


Sample requests by endpoint, user tier, and language mix so shadow tests reflect real workload shape. Narrow traffic samples produce misleading benchmark results.
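One way to keep the sample representative is to sample within each segment rather than across the whole request stream. The sketch below is illustrative only: the field names `endpoint`, `tier`, and `language` and the function `stratified_sample` are assumptions, not part of any specific product.

```python
import random
from collections import defaultdict


def stratified_sample(requests, rate, seed=0):
    """Sample roughly `rate` of the requests from each
    (endpoint, tier, language) stratum.

    `requests` is a list of dicts; the three keys used here are
    hypothetical field names chosen for illustration.
    """
    rng = random.Random(seed)  # seeded so the sample is reproducible
    strata = defaultdict(list)
    for req in requests:
        key = (req["endpoint"], req["tier"], req["language"])
        strata[key].append(req)

    sample = []
    for group in strata.values():
        # Keep at least one request per stratum so rare segments
        # (for example a low-volume language) are still represented.
        k = min(len(group), max(1, round(len(group) * rate)))
        sample.extend(rng.sample(group, k))
    return sample
```

Sampling per stratum preserves the workload shape at the chosen rate, while the one-request floor means small segments are slightly over-represented instead of dropped.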

Use consistent prompt versions, retrieval context, and tool outputs for all providers. Without normalization, differences in setup can be mistaken for provider performance differences.
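A simple way to enforce this is to freeze the full input once and attach a fingerprint of it to every provider call, so that results can only be compared when the fingerprints match. This is a minimal sketch; `ShadowInput` and `fan_out` are hypothetical names, and the fields mirror the three items named above.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ShadowInput:
    """Everything a provider sees for one shadow request (hypothetical shape)."""
    prompt_version: str
    user_message: str
    retrieval_context: tuple  # retrieved passages, frozen at capture time
    tool_outputs: tuple       # recorded tool results, replayed as-is

    def fingerprint(self):
        # Stable hash of the full input; sort_keys keeps it deterministic.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def fan_out(shadow_input, providers):
    """Build one job per provider, all sharing the same frozen input."""
    fp = shadow_input.fingerprint()
    return [
        {"provider": name, "input": shadow_input, "input_fingerprint": fp}
        for name in providers
    ]
```

Storing the fingerprint next to each result makes setup drift visible: if two results for the same request carry different fingerprints, the difference cannot be attributed to the provider.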

Do not rely on averages. Track p50, p95, and timeout rate per model-provider pair to understand tail behavior that impacts user experience during peak periods.
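The per-pair summary can be computed with a few lines of standard-library Python. The sketch below uses the nearest-rank percentile definition and assumes each record carries `model`, `provider`, `latency_ms`, and `timed_out` fields; those names are illustrative.

```python
import math
from collections import defaultdict


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an ascending list; None if the list is empty."""
    if not sorted_values:
        return None
    rank = math.ceil(pct * len(sorted_values) / 100)
    return sorted_values[max(rank, 1) - 1]


def latency_summary(records):
    """Group records by (model, provider) and report p50, p95, and timeout rate."""
    groups = defaultdict(list)
    for rec in records:
        groups[(rec["model"], rec["provider"])].append(rec)

    summary = {}
    for pair, rows in groups.items():
        # Percentiles cover completed requests only; timeouts are
        # reported separately, so read the two numbers together.
        completed = sorted(r["latency_ms"] for r in rows if not r["timed_out"])
        timeouts = sum(1 for r in rows if r["timed_out"])
        summary[pair] = {
            "requests": len(rows),
            "p50_ms": percentile(completed, 50),
            "p95_ms": percentile(completed, 95),
            "timeout_rate": timeouts / len(rows),
        }
    return summary
```

Excluding timed-out requests from the percentiles is a design choice made here for simplicity. An alternative is to count each timeout at the timeout ceiling, which pushes p95 up instead of hiding the failure in a separate column.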

Create structured rubrics by use case, such as factual grounding, format compliance, and policy adherence. Quality scoring should be repeatable across evaluators and time.
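A rubric becomes repeatable once the criteria, rating scale, and weights are written down as data rather than left to each evaluator. The sketch below uses the three criteria named above; the weights, the 0 to 4 scale, and the names `Criterion`, `RUBRIC`, and `score_response` are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float
    description: str


# Hypothetical weights; tune them per use case.
RUBRIC = (
    Criterion("factual_grounding", 0.5, "Claims are supported by the provided context."),
    Criterion("format_compliance", 0.3, "Output matches the required structure."),
    Criterion("policy_adherence", 0.2, "Output respects content and safety policy."),
)


def score_response(ratings, rubric=RUBRIC, scale_max=4):
    """Combine per-criterion integer ratings (0..scale_max) into a 0..1 score."""
    for criterion in rubric:
        if criterion.name not in ratings:
            raise ValueError(f"missing rating for {criterion.name}")
        rating = ratings[criterion.name]
        if not isinstance(rating, int) or not 0 <= rating <= scale_max:
            raise ValueError(f"rating for {criterion.name} must be an int in 0..{scale_max}")

    total_weight = sum(c.weight for c in rubric)
    weighted = sum(c.weight * ratings[c.name] / scale_max for c in rubric)
    return weighted / total_weight
```

Rejecting incomplete or out-of-range ratings keeps scores comparable across evaluators and over time, because every score is guaranteed to come from the same criteria and the same scale.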

Evaluate cost relative to successful outcomes, not raw request price. A cheaper model that needs extra retries or post-processing can be more expensive in practice.
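The comparison reduces to total spend divided by successful outcomes, where spend includes every retry and any post-processing. A minimal sketch, assuming each attempt is logged with a `cost_usd`, an optional `post_processing_usd`, and a `succeeded` flag (all hypothetical field names):

```python
def cost_per_success(attempts):
    """Total spend divided by the number of successful outcomes.

    Every attempt is billed, including failed ones and retries.
    Returns infinity when nothing succeeded.
    """
    total_cost = sum(
        a["cost_usd"] + a.get("post_processing_usd", 0.0) for a in attempts
    )
    successes = sum(1 for a in attempts if a["succeeded"])
    if successes == 0:
        return float("inf")
    return total_cost / successes


# Illustrative numbers only: a cheaper model that succeeds 4 times in 10
# attempts versus a pricier model that succeeds on every attempt.
cheap = [{"cost_usd": 0.004, "succeeded": i < 4} for i in range(10)]
premium = [{"cost_usd": 0.006, "succeeded": True} for _ in range(5)]
```

With these made-up figures the cheaper model costs about $0.010 per successful outcome against about $0.006 for the pricier one, which is the effect the paragraph above describes.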

Move from shadow to live traffic gradually: 5%, 20%, 50%, then full adoption. Staged ramps protect user experience while validating assumptions at increasing scale.
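A staged ramp is commonly implemented with deterministic hash bucketing, so that a user who was routed to the new provider at 5% stays on it at 20% and beyond. The sketch below is one possible shape; the function names are hypothetical, and rolling back to 0% (shadow only) on an unhealthy stage is an assumption, not a rule stated in this article.

```python
import hashlib

RAMP_STAGES = (5, 20, 50, 100)  # percent of live traffic on the candidate


def bucket(user_id):
    """Map a user ID to a stable bucket in 0..99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def routes_to_candidate(user_id, stage_percent):
    """True if this user is served by the candidate provider at this stage."""
    return bucket(user_id) < stage_percent


def next_stage(current_percent, healthy):
    """Advance one stage when metrics are healthy; otherwise fall back to 0."""
    if not healthy:
        return 0  # assumption: return to shadow-only on any regression
    for stage in RAMP_STAGES:
        if stage > current_percent:
            return stage
    return RAMP_STAGES[-1]
```

Because the bucket depends only on the user ID, each stage is a superset of the previous one, which keeps individual users from flipping between providers as the ramp advances.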

Shadow traffic makes provider selection evidence-based. Combine benchmark dashboards with routing controls so you can switch providers based on measured results rather than guesswork.