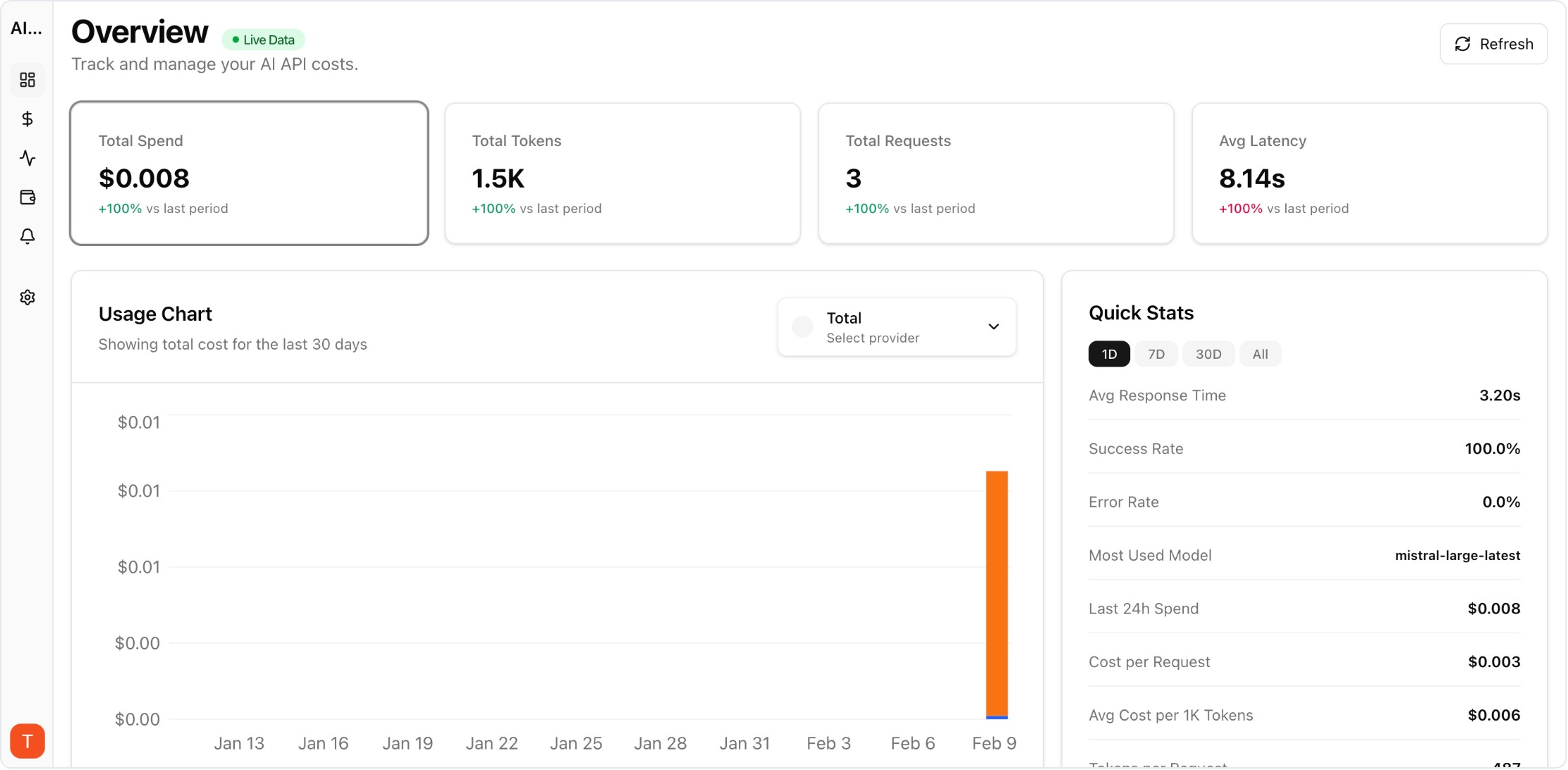

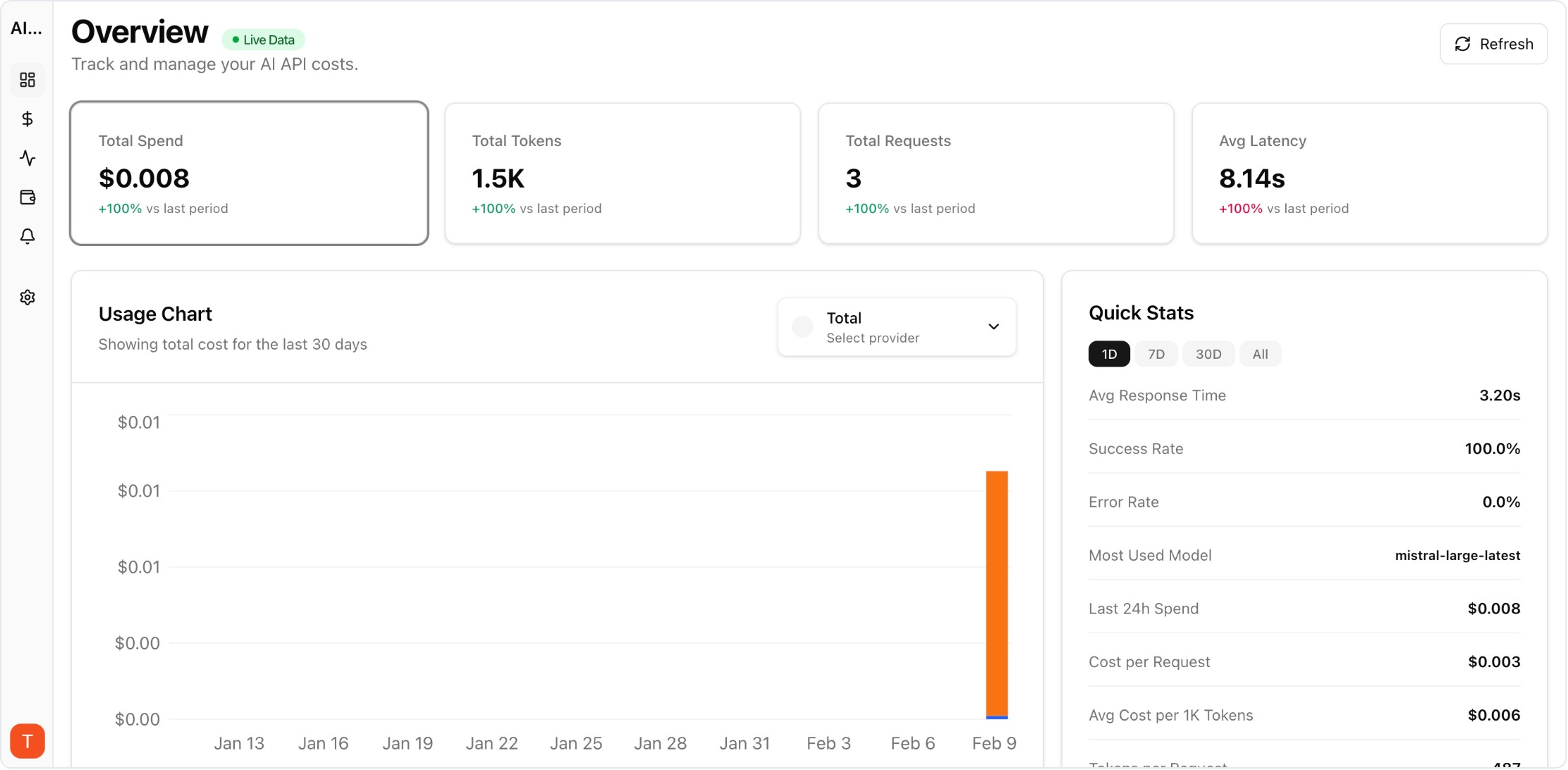

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

Mistral AI and xAI's Grok are increasingly common alternatives to OpenAI and Anthropic. Mistral offers European-hosted models with strong multilingual capabilities, while Grok offers strong reasoning performance at competitive prices. As teams adopt these providers alongside existing ones, monitoring costs across all of them becomes essential to keep budgets from fragmenting.

Adding new AI providers without cost monitoring creates blind spots. Teams often start with small experiments on Mistral or Grok, then gradually increase usage without tracking cumulative spend. Each provider has different pricing structures, billing cycles, and usage metrics. Centralized monitoring across all providers prevents the common problem of discovering unexpected charges at month-end.
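As a rough illustration of what centralizing means in practice, the sketch below normalizes token usage from different providers into a single USD figure. The price table, the model identifier strings, and the `UsageRecord` type are all assumptions made for this example; the prices are placeholders, not actual Mistral or xAI rates.

```python
from dataclasses import dataclass

# Placeholder prices in USD per 1M tokens. These are illustrative values,
# NOT real provider rates; replace them with figures from your invoices.
PRICES_PER_MILLION = {
    ("mistral", "mistral-large"): {"input": 2.00, "output": 6.00},
    ("xai", "grok-2"): {"input": 2.00, "output": 10.00},
}


@dataclass(frozen=True)
class UsageRecord:
    """One API request, reduced to the fields needed for costing (hypothetical shape)."""
    provider: str
    model: str
    input_tokens: int
    output_tokens: int


def request_cost(record: UsageRecord) -> float:
    """Return the USD cost of one request under the placeholder price table."""
    price = PRICES_PER_MILLION[(record.provider, record.model)]
    return (
        record.input_tokens * price["input"]
        + record.output_tokens * price["output"]
    ) / 1_000_000


def total_spend(records) -> dict:
    """Sum cost per provider so spend from every provider lands in one view."""
    totals = {}
    for record in records:
        totals[record.provider] = totals.get(record.provider, 0.0) + request_cost(record)
    return totals
```

Keeping the price table as data rather than code makes it easier to update when a provider changes its rates.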

Connect your Mistral API keys to AI Cost Board for automatic cost monitoring. Track per-model costs for Mistral Large, Mistral Small, and specialized models. Set up project-level attribution to understand which applications or teams drive Mistral usage. Configure budget alerts specific to your Mistral spend to catch anomalies early.
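The attribution and alerting logic described above can be sketched in a few lines. This is not AI Cost Board's implementation or API; the event tuple shape, the `budget_alerts` function, and the 80% warning ratio are assumptions chosen for illustration.

```python
from collections import defaultdict


def spend_by_project(events) -> dict:
    """Aggregate cost per (project, model) from (project, model, cost_usd) tuples."""
    totals = defaultdict(float)
    for project, model, cost_usd in events:
        totals[(project, model)] += cost_usd
    return dict(totals)


def budget_alerts(events, monthly_budget_usd, warn_ratio=0.8) -> list:
    """Return a message for each project at or above warn_ratio of its budget.

    Projects without a configured budget are skipped.
    """
    per_project = defaultdict(float)
    for project, _model, cost_usd in events:
        per_project[project] += cost_usd

    alerts = []
    for project, spent in sorted(per_project.items()):
        budget = monthly_budget_usd.get(project)
        if budget is not None and spent >= warn_ratio * budget:
            alerts.append(f"{project}: ${spent:.2f} of ${budget:.2f} budget used")
    return alerts
```

Alerting at a fraction of the budget, rather than at the limit itself, leaves time to react before the overrun happens.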

Monitor xAI Grok API usage the same way, by connecting your xAI API keys. Track costs for Grok 2 and Grok 2 Mini separately to understand cost-performance tradeoffs. Compare Grok pricing against equivalent models from other providers to validate your model selection strategy. Set up alerts for unexpected usage spikes.
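One simple way to define an "unexpected usage spike" is to compare today's spend against a trailing average. The sketch below assumes that definition; the function name, the 2x factor, and the minimum history length are illustrative choices, not settings taken from any specific tool.

```python
from statistics import mean


def is_usage_spike(daily_costs, today_cost, factor=2.0, min_history=3) -> bool:
    """Flag today's cost if it exceeds `factor` times the trailing average.

    daily_costs: recent daily spend in USD, excluding today.
    Returns False when there is too little history to form a baseline.
    """
    if len(daily_costs) < min_history:
        return False
    baseline = mean(daily_costs)
    return today_cost > factor * baseline
```

A fixed multiplier is crude but easy to reason about; teams with strong weekly seasonality may prefer to compare against the same weekday instead.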

Mistral Large competes with GPT-4o and Claude Sonnet on quality at competitive pricing. Mistral Small targets the GPT-4o-mini tier. Grok 2 sits between the mid-tier and premium models. Use AI Cost Board dashboards to compare actual per-request costs across providers and identify the most cost-effective model for each use case.
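The comparison itself reduces to average cost per request, grouped by use case. The following sketch assumes exported rows of `(use_case, model, total_cost_usd, request_count)`; that row shape and the function name are hypothetical, and the ranking considers cost only, not output quality.

```python
def cheapest_model_per_use_case(rows) -> dict:
    """Map each use case to its (model, avg_cost_per_request) with the lowest average.

    rows: iterable of (use_case, model, total_cost_usd, request_count).
    Rows with no requests are ignored to avoid dividing by zero.
    """
    best = {}
    for use_case, model, total_cost_usd, requests in rows:
        if requests <= 0:
            continue
        avg_cost = total_cost_usd / requests
        if use_case not in best or avg_cost < best[use_case][1]:
            best[use_case] = (model, avg_cost)
    return best
```

Because cheaper models may need retries or longer prompts to reach the same quality, treat the result as a starting point for evaluation rather than a final answer.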

Best practices for managing costs across multiple providers: (1) Use separate API keys per project or team for attribution. (2) Set provider-level budget alerts to catch spending anomalies. (3) Review cross-provider cost comparisons weekly. (4) Document which models are approved for which use cases. (5) Consolidate reporting in a single dashboard for finance visibility.
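Practice (4) is easier to enforce when the approved-model list lives in code or configuration rather than in a wiki page. A minimal sketch follows, assuming a hypothetical policy table; the use-case names and model identifier strings are invented for the example.

```python
# Hypothetical policy: which models each use case may call.
APPROVED_MODELS = {
    "customer-support": {"mistral-small", "grok-2-mini"},
    "code-review": {"mistral-large", "grok-2"},
}


def is_approved(use_case, model, policy=APPROVED_MODELS) -> bool:
    """Return True if `model` is on the approved list for `use_case`."""
    return model in policy.get(use_case, set())


def unapproved_calls(calls, policy=APPROVED_MODELS) -> list:
    """Filter (use_case, model) pairs down to those that violate the policy."""
    return [(use_case, model) for use_case, model in calls
            if not is_approved(use_case, model, policy)]
```

Running a check like this over usage logs during the weekly review surfaces model drift before it shows up as unexplained spend.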

As the AI provider landscape expands, cost monitoring must keep pace. Centralized tracking across Mistral, Grok, and your existing providers prevents budget fragmentation and enables informed model selection.