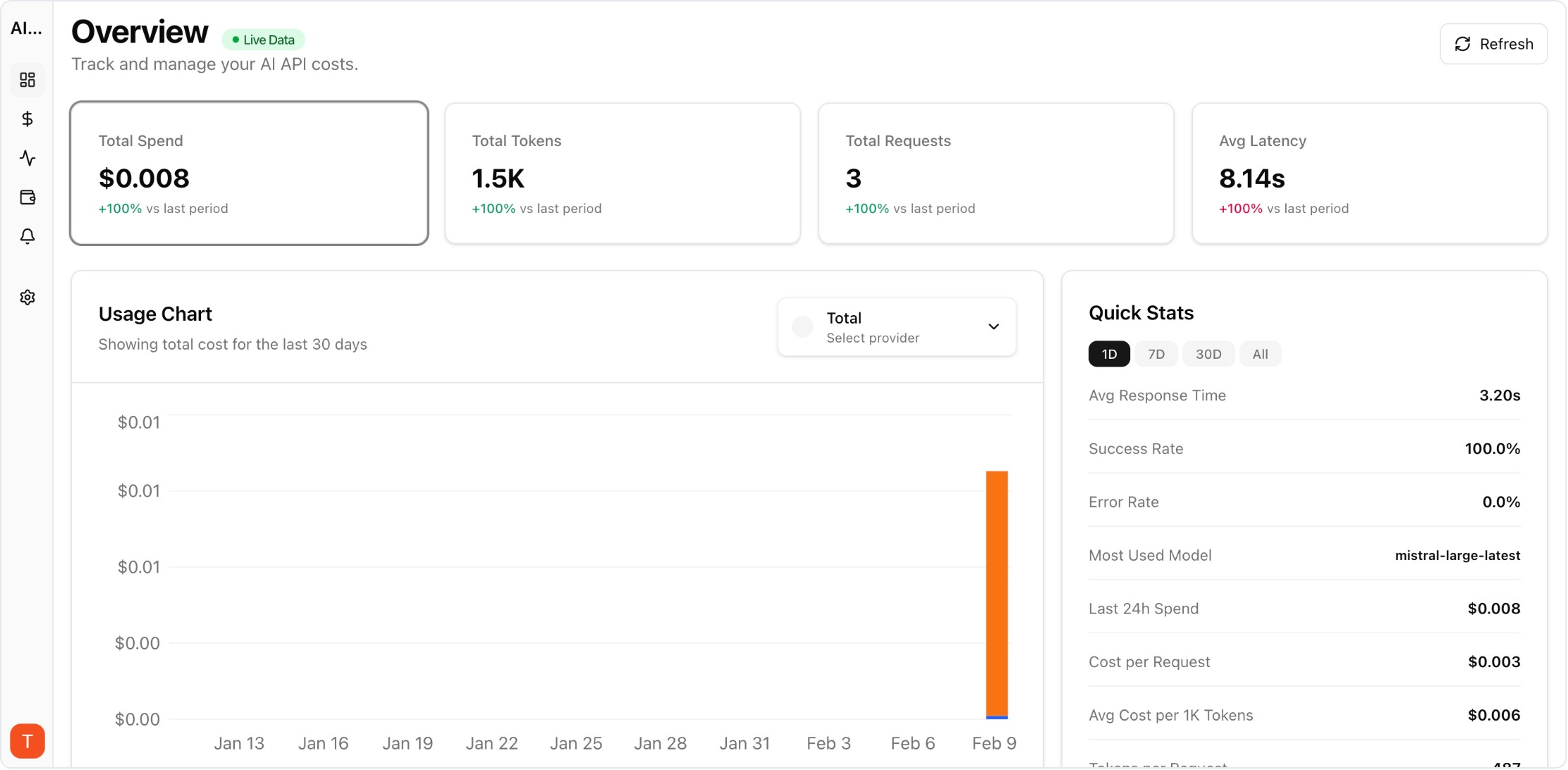

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

AWS Bedrock and Google Vertex AI provide managed access to leading LLMs within their cloud ecosystems. This simplifies deployment but complicates cost tracking: AI costs are buried in larger cloud bills alongside compute, storage, and networking charges. Extracting and monitoring AI-specific costs requires dedicated tooling and governance practices.

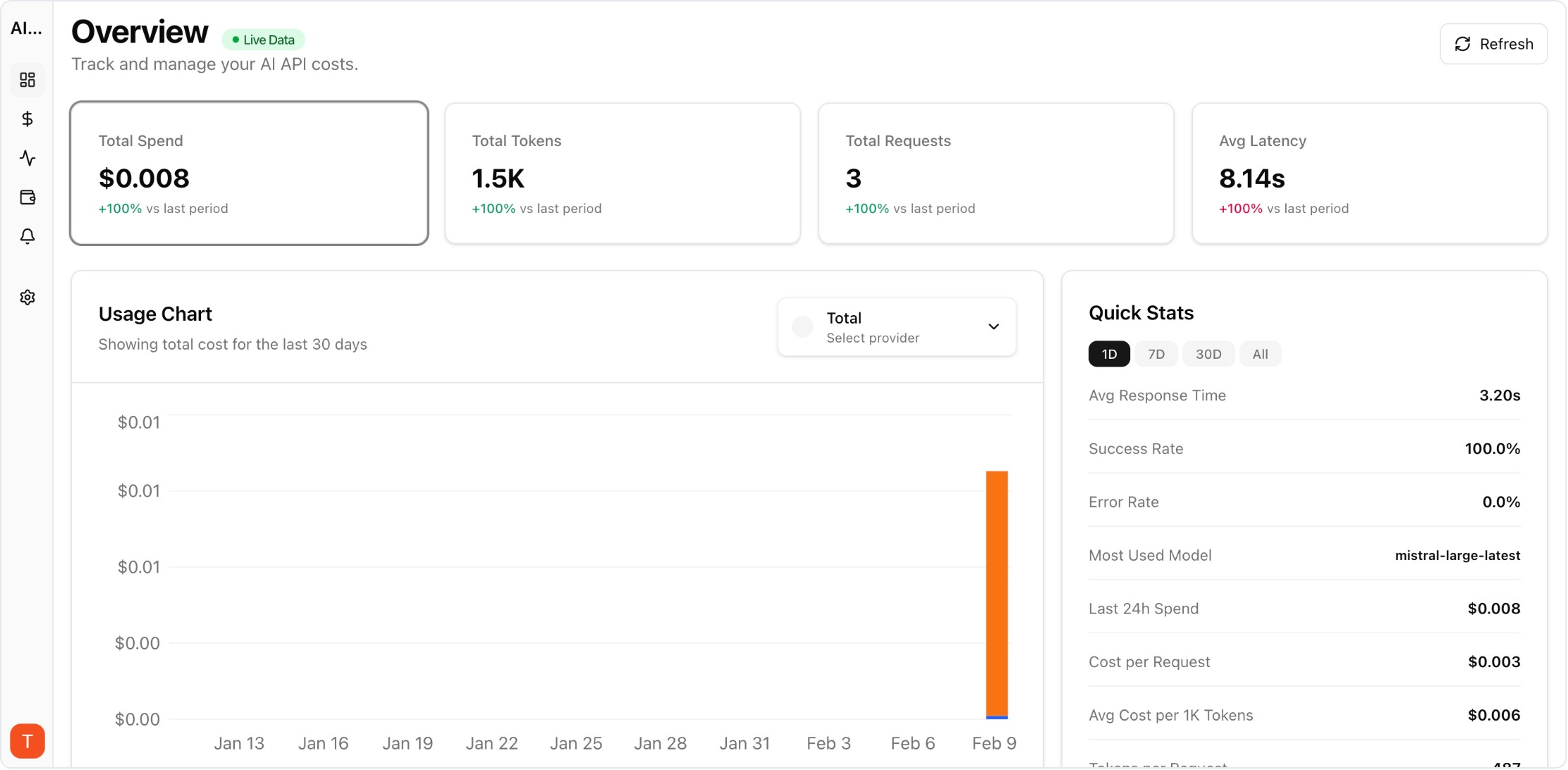


Cloud AI platforms like Bedrock and Vertex AI bill through the cloud provider's billing system. AI costs appear as line items within much larger cloud bills, making it difficult to isolate LLM spend from other services. Pricing also differs by model within the same platform, and cross-cloud cost comparison (Bedrock vs Vertex vs direct API) requires normalizing pricing across three different billing systems.
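As a rough illustration of that normalization step, the sketch below converts quotes given per 1K tokens, per 1M tokens, or per 1K characters into one figure: USD per million tokens. The `ListedPrice` type, the example prices, and the 4-characters-per-token ratio are all assumptions made for this example, not published rates; real ratios depend on the tokenizer and the language of the text.

```python
from dataclasses import dataclass

# Assumed average; measure this on your own traffic before relying on it.
CHARS_PER_TOKEN = 4.0


@dataclass(frozen=True)
class ListedPrice:
    """A price as quoted on a pricing page (hypothetical structure)."""
    platform: str
    model: str
    amount_usd: float  # quoted amount
    per_units: int     # quantity the quote covers, e.g. 1_000 or 1_000_000
    unit: str          # "token" or "character"


def usd_per_million_tokens(price: ListedPrice) -> float:
    """Convert any supported quote into USD per one million tokens."""
    per_unit = price.amount_usd / price.per_units
    if price.unit == "token":
        return per_unit * 1_000_000
    if price.unit == "character":
        # One token is assumed to cover CHARS_PER_TOKEN characters.
        return per_unit * CHARS_PER_TOKEN * 1_000_000
    raise ValueError(f"unknown unit: {price.unit}")


if __name__ == "__main__":
    # Placeholder numbers, not real list prices.
    quotes = [
        ListedPrice("bedrock", "model-a", 0.003, 1_000, "token"),
        ListedPrice("vertex", "model-b", 0.000125, 1_000, "character"),
        ListedPrice("direct", "model-a", 3.0, 1_000_000, "token"),
    ]
    for q in quotes:
        print(f"{q.platform:8s} {q.model:8s} {usd_per_million_tokens(q):.4f} USD / 1M tokens")
```

Input and output tokens are usually priced separately, so in practice each model would carry two such quotes.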

AWS Bedrock charges per input and output token, with pricing that varies by model (for example Claude, Llama, and Mistral models on Bedrock). Apply cost allocation tags and filter on them in AWS Cost Explorer to isolate Bedrock spend. Create dedicated IAM roles per application for cost attribution. Compare provisioned throughput against on-demand pricing for your traffic. Connect to AI Cost Board for cross-platform cost comparison with direct API costs.
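One way to pull tagged Bedrock spend programmatically is the Cost Explorer `GetCostAndUsage` API, available in boto3 as `get_cost_and_usage`. The sketch below is a minimal example under several assumptions: the tag key `application` is hypothetical, the service name `Amazon Bedrock` should be checked against the values shown in your own Cost Explorer, and the tag must already be activated as a cost allocation tag. Pagination via `NextPageToken` is left out for brevity.

```python
from datetime import date


def bedrock_cost_request(start: date, end: date, tag_key: str = "application") -> dict:
    """Build keyword arguments for Cost Explorer's GetCostAndUsage call."""
    return {
        # The End date is exclusive in Cost Explorer.
        "TimePeriod": {"Start": start.isoformat(), "End": end.isoformat()},
        "Granularity": "DAILY",
        "Metrics": ["UnblendedCost"],
        # Assumed service name; confirm it in your own account.
        "Filter": {"Dimensions": {"Key": "SERVICE", "Values": ["Amazon Bedrock"]}},
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
    }


def daily_cost_by_tag(response: dict) -> dict:
    """Flatten a GetCostAndUsage response into {(day, tag): usd}."""
    totals = {}
    for period in response["ResultsByTime"]:
        day = period["TimePeriod"]["Start"]
        for group in period["Groups"]:
            tag = group["Keys"][0]  # formatted as "<tag_key>$<tag_value>"
            totals[(day, tag)] = float(group["Metrics"]["UnblendedCost"]["Amount"])
    return totals


def fetch_bedrock_costs(start: date, end: date, tag_key: str = "application") -> dict:
    """Call AWS; requires boto3 and credentials with ce:GetCostAndUsage."""
    import boto3  # imported lazily so the helpers above work without boto3

    client = boto3.client("ce")
    response = client.get_cost_and_usage(**bedrock_cost_request(start, end, tag_key))
    return daily_cost_by_tag(response)
```

Calling `fetch_bedrock_costs(date(2025, 1, 1), date(2025, 2, 1))` would return one entry per day and tag value. Untagged usage appears under a key with an empty value after the `$`.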

Vertex AI pricing includes per-character or per-token charges depending on the model (Gemini, PaLM). Use Google Cloud billing exports to BigQuery for detailed cost analysis. Set up budget alerts in Google Cloud Console for Vertex AI services. Compare Vertex AI Gemini pricing against direct Gemini API pricing to ensure you are getting the best rate for your usage pattern.
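Once the billing export lands in BigQuery, Vertex AI spend can be broken down by day and SKU with a short query. The sketch below assumes the standard usage cost export schema (`service.description`, `sku.description`, `usage_start_time`, `cost`) and assumes the service is labeled `Vertex AI` in your export; the table name is a placeholder you would replace with your own. The helper names are hypothetical.

```python
from datetime import date


def vertex_ai_cost_query(billing_table: str) -> str:
    """Return SQL that sums Vertex AI cost per day and SKU.

    billing_table must come from trusted configuration, since it is
    interpolated directly into the query text.
    """
    return f"""
    SELECT
      DATE(usage_start_time) AS usage_day,
      sku.description AS sku,
      SUM(cost) AS cost
    FROM `{billing_table}`
    WHERE service.description = 'Vertex AI'
      AND usage_start_time >= TIMESTAMP(@start_date)
      AND usage_start_time < TIMESTAMP(@end_date)
    GROUP BY usage_day, sku
    ORDER BY usage_day, cost DESC
    """


def run_vertex_ai_cost_query(billing_table: str, start: date, end: date) -> list:
    """Execute the query; requires google-cloud-bigquery and credentials."""
    from google.cloud import bigquery  # imported lazily; optional dependency

    client = bigquery.Client()
    job_config = bigquery.QueryJobConfig(
        query_parameters=[
            bigquery.ScalarQueryParameter("start_date", "DATE", start),
            bigquery.ScalarQueryParameter("end_date", "DATE", end),
        ]
    )
    rows = client.query(vertex_ai_cost_query(billing_table), job_config=job_config).result()
    return [(row["usage_day"], row["sku"], row["cost"]) for row in rows]
```

The `cost` column excludes credits, so discounts and committed-use adjustments would need the `credits` field as well if net spend is the goal.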

Managed platforms (Bedrock, Vertex) often cost more per token than direct API access, but offer benefits: VPC integration, compliance certifications, unified billing, and SLA guarantees. The premium is usually worth paying when enterprise compliance requirements apply, but often is not for cost-sensitive startups. Monitor both options side-by-side with AI Cost Board to quantify the managed platform premium.
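Quantifying the premium comes down to pricing the same monthly token volume both ways and taking the relative difference. The following is a minimal sketch; the function names, token volumes, and per-million-token rates are illustrative assumptions, not real list prices.

```python
def monthly_cost(input_tokens: int, output_tokens: int,
                 usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Cost in USD for a month of traffic at the given per-million-token rates."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


def managed_premium(managed_cost: float, direct_cost: float) -> float:
    """Relative premium of the managed platform over direct API access.

    0.10 means the managed platform costs 10% more; a negative value
    means it is cheaper.
    """
    if direct_cost <= 0:
        raise ValueError("direct_cost must be positive")
    return (managed_cost - direct_cost) / direct_cost


if __name__ == "__main__":
    # Hypothetical workload: 500M input tokens and 100M output tokens per month.
    direct = monthly_cost(500_000_000, 100_000_000, 3.00, 15.00)
    managed = monthly_cost(500_000_000, 100_000_000, 3.30, 16.50)
    print(f"direct:  {direct:.2f} USD")
    print(f"managed: {managed:.2f} USD")
    print(f"premium: {managed_premium(managed, direct):.1%}")
```

The resulting percentage can then be weighed against what the compliance and networking features would cost to build or buy separately.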

Organizations using multiple cloud AI platforms need unified governance: (1) Establish a single dashboard for all AI costs across Bedrock, Vertex, Azure OpenAI, and direct APIs. (2) Set budget alerts per platform and per application. (3) Compare model costs across platforms to optimize routing. (4) Generate consolidated reports for finance teams showing total AI spend regardless of platform.
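The consolidation and alerting steps above can be sketched with a few lines of aggregation. This example assumes cost records have already been collected from each platform into a common shape; the `CostRecord` type, the platform and application names, and the 80% alert threshold are hypothetical choices for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRecord:
    platform: str     # e.g. "bedrock", "vertex", "azure-openai", "direct"
    application: str  # owning application or team
    usd: float


def consolidate(records: list) -> dict:
    """Total spend overall, per platform, and per application."""
    by_platform = defaultdict(float)
    by_application = defaultdict(float)
    for r in records:
        by_platform[r.platform] += r.usd
        by_application[r.application] += r.usd
    return {
        "total": sum(r.usd for r in records),
        "by_platform": dict(by_platform),
        "by_application": dict(by_application),
    }


def over_budget(spend: dict, budgets: dict, threshold: float = 0.8) -> list:
    """Names whose spend has reached threshold * budget, sorted for stable output."""
    return sorted(
        name for name, limit in budgets.items()
        if spend.get(name, 0.0) >= threshold * limit
    )


if __name__ == "__main__":
    records = [
        CostRecord("bedrock", "support-bot", 400.0),
        CostRecord("vertex", "support-bot", 150.0),
        CostRecord("direct", "search", 90.0),
    ]
    report = consolidate(records)
    print(report)
    print(over_budget(report["by_platform"], {"bedrock": 450.0, "vertex": 500.0}))
```

The same `over_budget` check works for per-application budgets by passing `report["by_application"]` instead.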


Cloud AI platforms simplify deployment but complicate cost tracking. Dedicated monitoring across Bedrock, Vertex, and direct APIs gives you the visibility needed for informed cost governance.