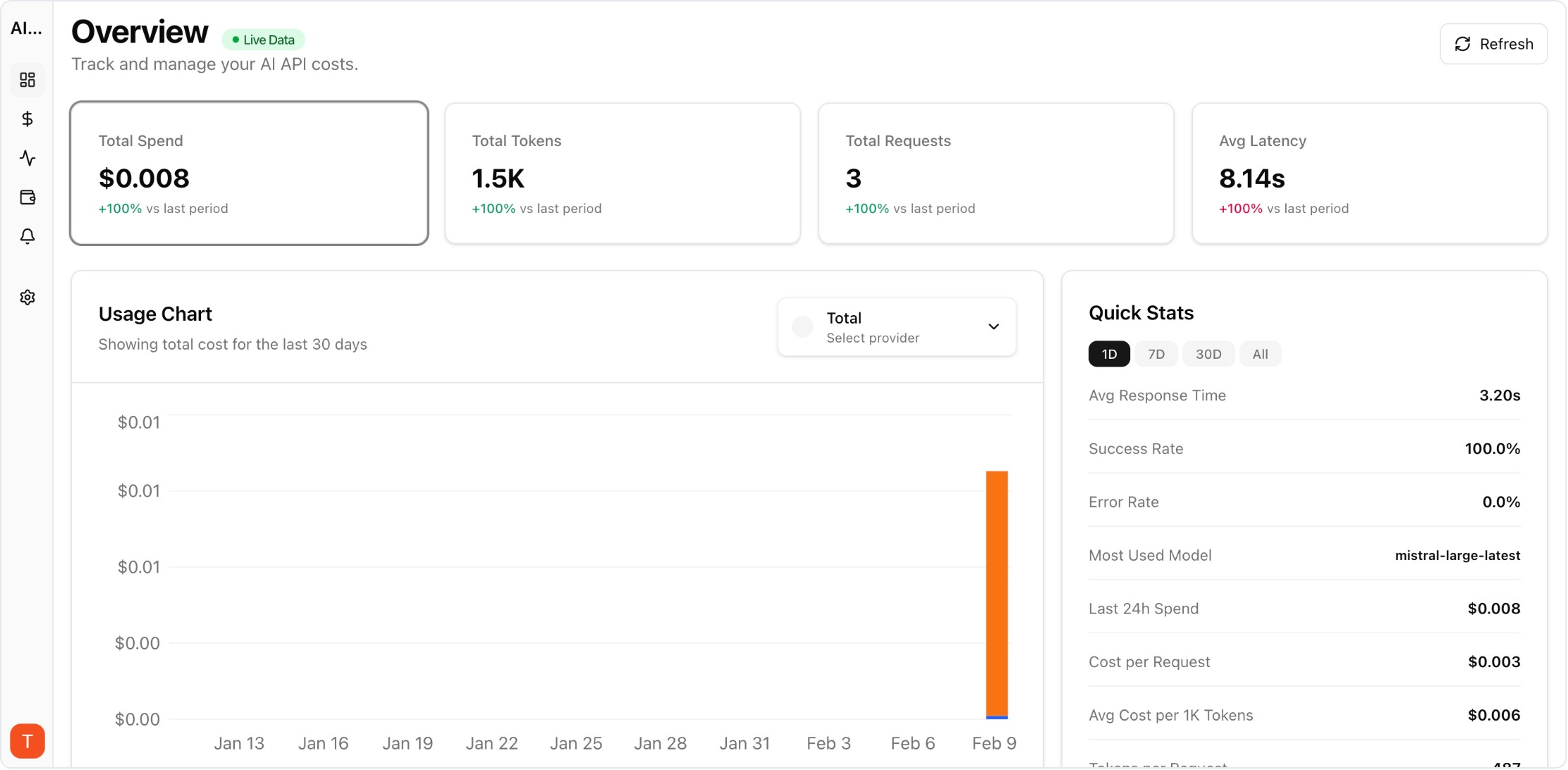

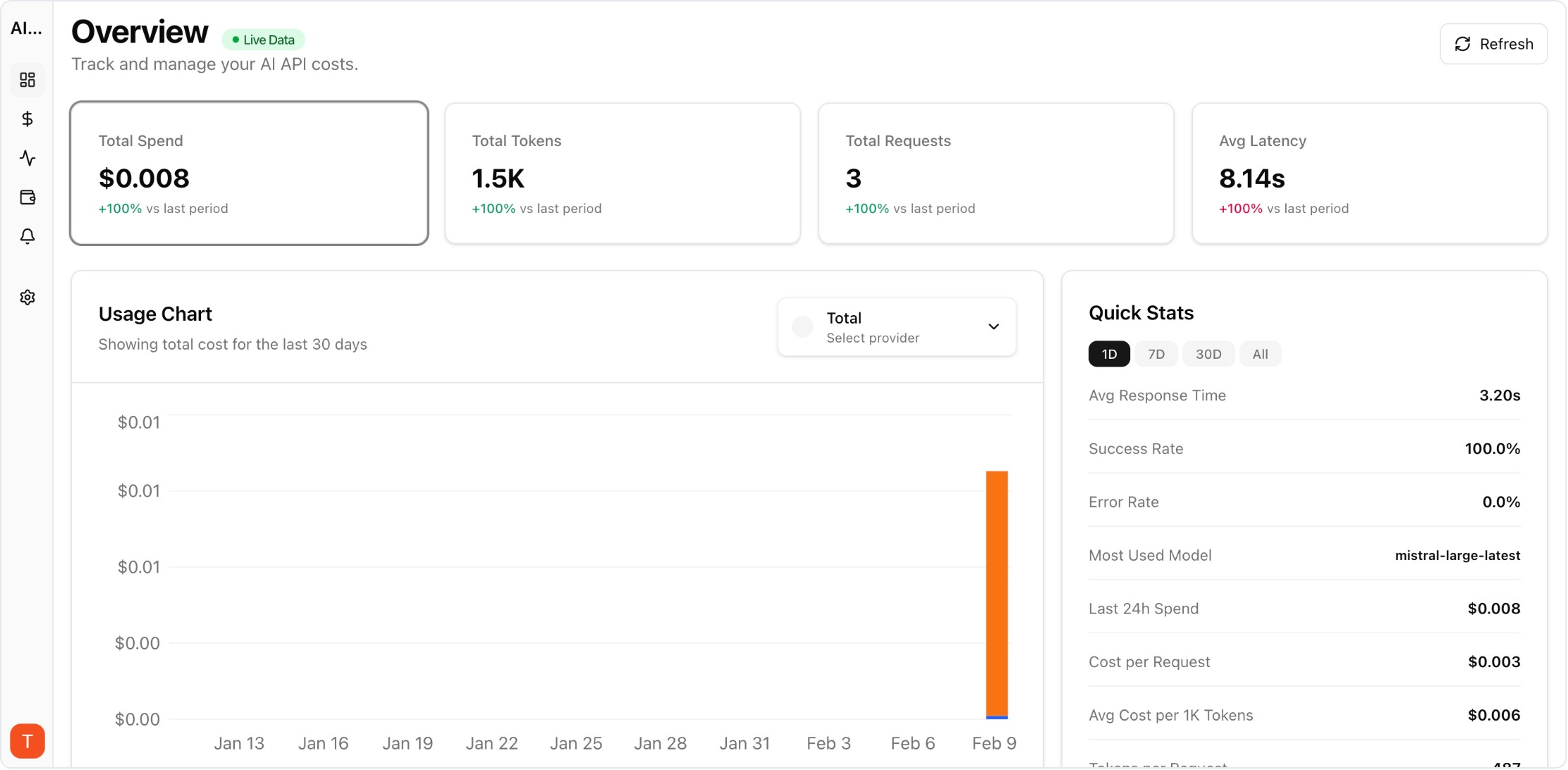

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

AI gateways and LLM proxies sit between your application and LLM providers, adding features like load balancing, caching, and unified logging. But they also add latency, complexity, and another dependency. The decision between gateway and direct API integration depends on your scale, provider count, and feature requirements. Here is a practical framework for choosing the right approach.

An AI gateway (or LLM proxy) is a middleware layer that intercepts LLM API calls. Instead of calling OpenAI directly, your application calls the gateway, which forwards requests to the provider. This interception point enables features like load balancing across providers, request caching, rate limiting, automatic retries, and centralized logging. Popular gateways include LiteLLM, Portkey, and Cloudflare AI Gateway.
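To make the interception point concrete, here is a minimal in-process sketch of what a gateway does with a request: check a cache, try providers in order, and log the outcome. It is a toy model, not how LiteLLM, Portkey, or Cloudflare AI Gateway are implemented. The names `MiniGateway`, `flaky_provider`, `backup_provider`, and `example-model` are hypothetical, and the providers are plain Python callables rather than real network clients.

```python
import hashlib
import json
from typing import Callable

# Assumption: a "provider" is any callable that takes a request dict and returns text.
Provider = Callable[[dict], str]


class MiniGateway:
    """Toy stand-in for a gateway: request cache, ordered failover, logging."""

    def __init__(self, providers: dict[str, Provider]) -> None:
        self.providers = providers          # tried in insertion order
        self.cache: dict[str, str] = {}     # request hash -> response text
        self.log: list[dict] = []           # one entry per handled request

    @staticmethod
    def _key(request: dict) -> str:
        # Stable hash of the request body so identical requests share a cache entry.
        body = json.dumps(request, sort_keys=True).encode("utf-8")
        return hashlib.sha256(body).hexdigest()

    def complete(self, request: dict) -> str:
        key = self._key(request)
        if key in self.cache:
            self.log.append({"provider": None, "cache_hit": True})
            return self.cache[key]

        errors = []
        for name, call in self.providers.items():
            try:
                text = call(request)
            except Exception as exc:  # a real gateway would be more selective
                errors.append(f"{name}: {exc}")
                continue
            self.cache[key] = text
            self.log.append({"provider": name, "cache_hit": False})
            return text
        raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky_provider(request: dict) -> str:
    # Hypothetical provider that is always down.
    raise TimeoutError("simulated outage")


def backup_provider(request: dict) -> str:
    # Hypothetical provider that echoes the last user message.
    return "echo: " + request["messages"][-1]["content"]


gateway = MiniGateway({"primary": flaky_provider, "backup": backup_provider})
request = {"model": "example-model", "messages": [{"role": "user", "content": "hi"}]}
first = gateway.complete(request)    # primary fails, backup answers
second = gateway.complete(request)   # identical request, served from the cache
```

The application only ever calls `gateway.complete`; which provider answered, and whether the cache was used, is visible only in `gateway.log`. That separation is the point of the middleware layer.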

Direct API integration is simpler and adds no proxy hop, so there is no gateway latency overhead. Choose direct API calls when you use a single LLM provider, your application has straightforward call patterns, you want minimal infrastructure dependencies, or you are in early development and development speed matters most. Direct calls also avoid the operational overhead of maintaining gateway infrastructure.
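As a rough illustration of how little a direct integration involves, the sketch below builds a single HTTPS request to OpenAI's chat completions endpoint using only the Python standard library. The helper name `build_chat_request`, the placeholder key, and the model name `example-model` are assumptions for illustration. The request is constructed but not sent.

```python
import json
import urllib.request

OPENAI_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str,
                       url: str = OPENAI_URL) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key and model name; substitute real values before sending.
req = build_chat_request("sk-placeholder", "example-model", "Hello")
# Sending it would be: urllib.request.urlopen(req, timeout=30)
```

If you later adopt a gateway that exposes an OpenAI-compatible endpoint, the application-side change can be as small as passing a different `url`, which is one reason starting direct costs little.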

Gateways become valuable when: you use multiple LLM providers and want unified API access, you need automatic failover between providers, you want request-level caching to reduce costs, you require centralized rate limiting and access controls, or you need a unified logging layer for all LLM interactions. The gateway essentially becomes your LLM infrastructure layer.
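The criteria above can be sketched as a small checklist function. This is one possible encoding, not a definitive rule: the `Requirements` fields and the `recommend` function are hypothetical, and treating any single criterion as enough to suggest a gateway is a simplifying assumption. In practice you would weigh the listed reasons against the operational overhead.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    provider_count: int = 1
    needs_failover: bool = False
    needs_caching: bool = False
    needs_central_controls: bool = False   # rate limiting, access controls
    needs_unified_logging: bool = False


def recommend(req: Requirements) -> tuple[str, list[str]]:
    """Return ("gateway" | "direct", reasons that point toward a gateway)."""
    reasons = []
    if req.provider_count > 1:
        reasons.append("multiple providers behind one API")
    if req.needs_failover:
        reasons.append("automatic failover between providers")
    if req.needs_caching:
        reasons.append("request-level caching")
    if req.needs_central_controls:
        reasons.append("centralized rate limiting and access controls")
    if req.needs_unified_logging:
        reasons.append("unified logging")
    # Assumption: any one reason is treated as a signal worth evaluating.
    return ("gateway" if reasons else "direct"), reasons


early_stage = recommend(Requirements())
multi_provider = recommend(Requirements(provider_count=3, needs_failover=True))
```

Returning the reasons alongside the recommendation keeps the decision auditable: a single weak reason may not justify running extra infrastructure, while several together usually do.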

Whether you use a gateway or direct API, cost monitoring is essential. AI Cost Board works with both approaches — it monitors costs at the API key level, regardless of whether requests flow through a gateway or directly to providers. This means you can add cost governance, budget alerts, and spend attribution without changing your architecture.

Top AI gateways by category:

1. Open-source, self-hosted: LiteLLM (100+ LLMs, unified API).
2. Managed enterprise: Portkey (1600+ LLMs, guardrails, compliance).
3. Edge-based: Cloudflare AI Gateway (caching, rate limiting, edge deployment).
4. Developer-first: Helicone (simple one-line proxy, analytics).

Each has different strengths depending on your scale and requirements.

The gateway vs. direct API decision should be driven by your actual requirements, not trends. Start with direct calls, and add a gateway once the benefits clearly outweigh the operational overhead.