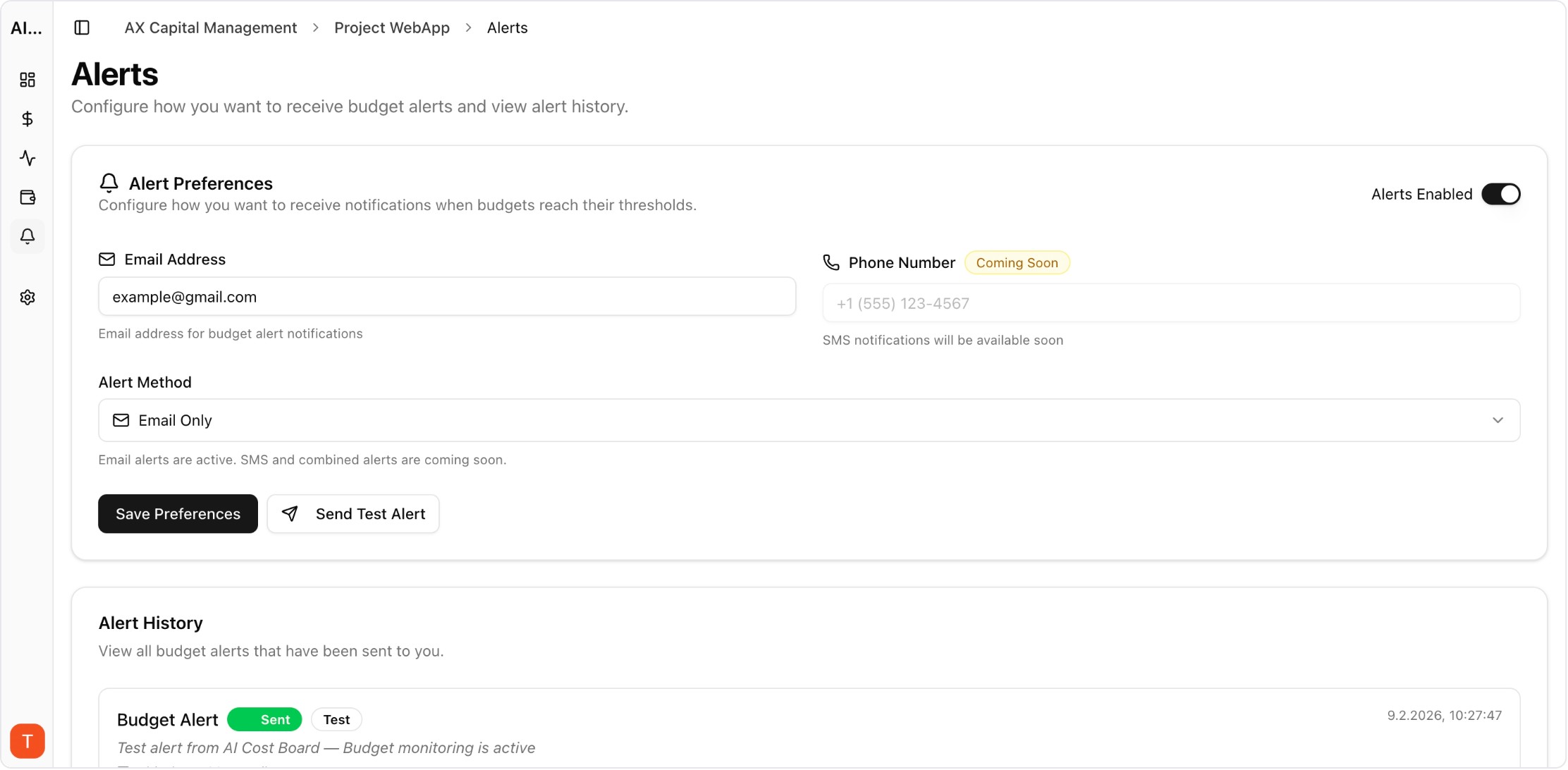

Real UI snapshot used to anchor the operational workflow described in this article.

The self-hosted vs cloud debate for LLM monitoring tools involves more nuance than a simple feature comparison. Self-hosted tools like Langfuse and LiteLLM offer control and customization. Cloud-managed tools like AI Cost Board and Helicone offer zero-maintenance deployment. The right choice depends on your team size, infrastructure expertise, compliance requirements, and how much total cost of ownership you are prepared to absorb.

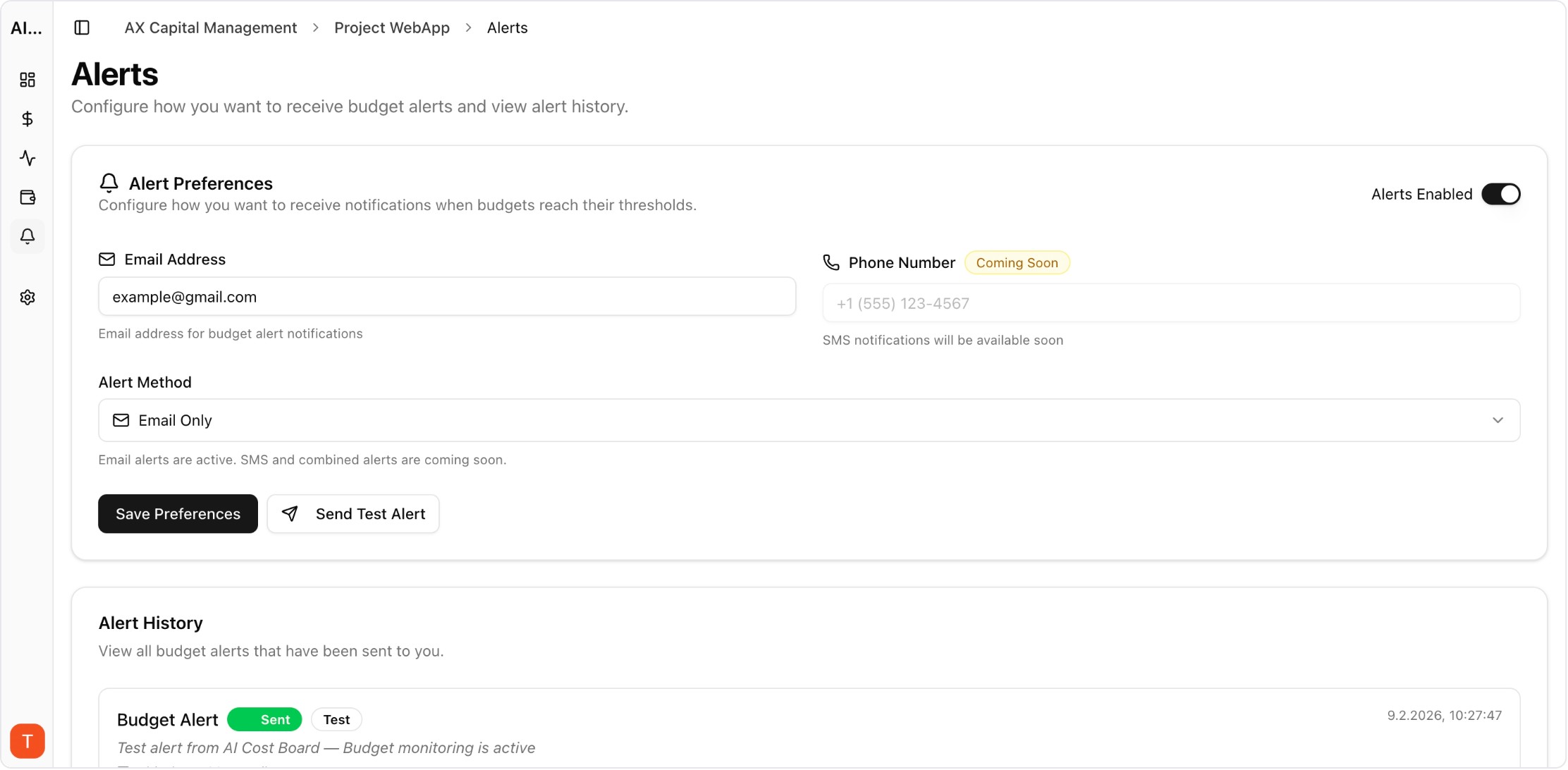

Self-hosted tools are often free to license but carry hidden costs: server infrastructure ($100-500/month for production-grade hosting), engineering time for setup and maintenance (40-80 hours initially, then 5-10 hours/month), security patching and updates, database management and scaling, and backup and disaster recovery. Once engineering time is priced in, the total cost of ownership of self-hosted monitoring typically exceeds that of a managed solution.
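To make the comparison concrete, here is a minimal TCO sketch that plugs in the low end of the ranges above. The $100/hour engineering rate is a hypothetical assumption (substitute your own loaded rate), and the managed side uses only the $9.99/month entry price mentioned below, so it ignores higher plan tiers and integration time.

```python
from dataclasses import dataclass


@dataclass
class SelfHostedCosts:
    """Monthly and one-off costs for a self-hosted monitoring stack."""
    hosting_per_month: float            # article range: $100-500/month
    setup_hours: float                  # article range: 40-80 hours, one-off
    maintenance_hours_per_month: float  # article range: 5-10 hours/month
    engineer_hourly_rate: float         # hypothetical: use your own loaded rate


def self_hosted_tco(costs: SelfHostedCosts, months: int) -> float:
    """One-off setup labor plus recurring hosting and maintenance labor over `months`."""
    setup = costs.setup_hours * costs.engineer_hourly_rate
    monthly = (costs.hosting_per_month
               + costs.maintenance_hours_per_month * costs.engineer_hourly_rate)
    return setup + monthly * months


def managed_tco(subscription_per_month: float, months: int) -> float:
    """Subscription cost only; ignores integration time and plan upgrades."""
    return subscription_per_month * months


if __name__ == "__main__":
    low_end = SelfHostedCosts(hosting_per_month=100, setup_hours=40,
                              maintenance_hours_per_month=5,
                              engineer_hourly_rate=100)
    print(f"Self-hosted, 12 months: ${self_hosted_tco(low_end, 12):,.2f}")  # $11,200.00
    print(f"Managed, 12 months: ${managed_tco(9.99, 12):,.2f}")  # $119.88
```

Even at the cheapest end of every range, maintenance labor dominates the self-hosted total, which is why engineering time is the deciding factor rather than server costs.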

Cloud-managed tools handle infrastructure, scaling, security, backups, and updates. You connect via API key and get immediate monitoring capabilities. The tradeoffs are vendor dependency, less customization, LLM request data leaving your infrastructure, and a monthly subscription cost. AI Cost Board, for example, starts at $9.99/month with zero infrastructure requirements.

Self-hosted is the right choice when: (1) regulatory requirements mandate that LLM request data stays on-premise, (2) your team has dedicated DevOps/platform engineers with capacity, (3) you need deep customization of the monitoring tool itself, or (4) you already run Kubernetes, so adding another service is an incremental change. If none of these apply, cloud-managed is usually more cost-effective.

Cloud-managed monitoring fits when: (1) your team wants to focus on product, not monitoring infrastructure, (2) you need monitoring up and running in minutes, not weeks, (3) engineering time is more expensive than subscription costs, or (4) you want guaranteed uptime, security updates, and scaling without operational burden. This describes most startups and mid-size engineering teams.
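The criteria above can be treated as a simple checklist. The sketch below encodes them literally: if any self-hosting criterion applies it recommends self-hosting and reports why, otherwise it defaults to cloud-managed. `TeamProfile` and `recommend_deployment` are hypothetical names, and a real decision will weigh these factors rather than treat them as strict booleans.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    on_prem_data_required: bool     # regulation keeps LLM request data on-premise
    has_platform_capacity: bool     # dedicated DevOps/platform engineers with capacity
    needs_deep_customization: bool  # the monitoring tool itself must be modified
    runs_kubernetes: bool           # one more service is an incremental change


def recommend_deployment(team: TeamProfile) -> tuple[str, list[str]]:
    """Return a recommendation plus the criteria that drove it."""
    reasons = []
    if team.on_prem_data_required:
        reasons.append("regulation requires LLM request data to stay on-premise")
    if team.has_platform_capacity:
        reasons.append("dedicated platform engineers have capacity")
    if team.needs_deep_customization:
        reasons.append("the monitoring tool needs deep customization")
    if team.runs_kubernetes:
        reasons.append("existing Kubernetes makes another service incremental")
    if reasons:
        return "self-hosted", reasons
    # No self-hosting criterion applies: default to the managed option.
    return "cloud-managed", ["no self-hosting criterion applies"]


if __name__ == "__main__":
    startup = TeamProfile(False, False, False, False)
    choice, why = recommend_deployment(startup)
    print(choice, why)  # cloud-managed ['no self-hosting criterion applies']
```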

The two approaches can also be combined. A common hybrid approach uses a self-hosted proxy (like LiteLLM) for request routing while sending cost and usage data to a cloud monitoring tool (like AI Cost Board) for governance and reporting. This preserves infrastructure control for request handling while offloading monitoring complexity to a managed service.
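A minimal sketch of the hand-off in that hybrid setup, assuming the proxy returns OpenAI-format chat completion responses (which carry a `usage` object with `prompt_tokens` and `completion_tokens`). The price table, the `feature` tag, and the record shape are hypothetical, and the actual forwarding call to a monitoring service is left out because each vendor's ingestion API differs. Note that the record contains only model, token counts, and cost, not prompt or completion text, which is what keeps request content inside your infrastructure.

```python
import json
from datetime import datetime, timezone

# Hypothetical per-1M-token prices in USD; replace with your providers' current rates.
PRICES_PER_MILLION = {
    "example-model": {"input": 1.00, "output": 4.00},
}


def build_usage_record(response: dict, feature: str) -> dict:
    """Turn an OpenAI-format chat completion response into a metadata-only cost record."""
    usage = response["usage"]
    model = response["model"]
    price = PRICES_PER_MILLION[model]
    cost = (usage["prompt_tokens"] * price["input"]
            + usage["completion_tokens"] * price["output"]) / 1_000_000
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model,
        "feature": feature,  # hypothetical tag for attributing spend in reports
        "prompt_tokens": usage["prompt_tokens"],
        "completion_tokens": usage["completion_tokens"],
        "cost_usd": round(cost, 6),
    }


if __name__ == "__main__":
    sample = {
        "model": "example-model",
        "choices": [{"message": {"role": "assistant", "content": "..."}}],
        "usage": {"prompt_tokens": 1200, "completion_tokens": 300, "total_tokens": 1500},
    }
    record = build_usage_record(sample, feature="support-chat")
    print(record["cost_usd"])  # 0.0024
    print(json.dumps(record, indent=2))  # payload you would forward to the monitoring tool
```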

Choose based on your team constraints, not ideology. Most teams benefit from cloud-managed monitoring for cost governance and only self-host when specific compliance or customization requirements demand it.