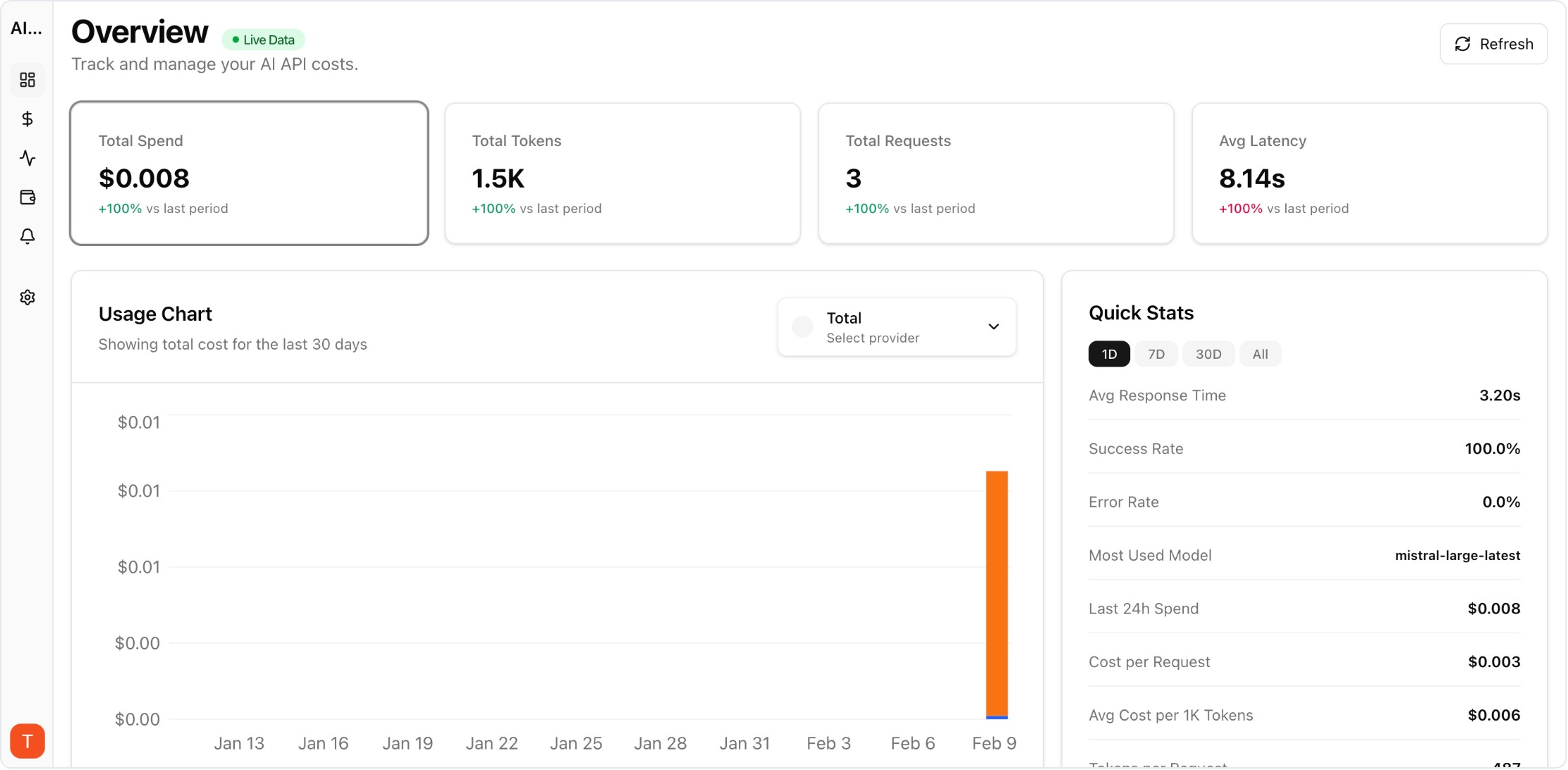

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

Routing policies often start as engineering intuition and remain unchanged long after workloads evolve. A benchmark framework creates a repeatable way to update routing decisions using current evidence across cost, latency, and quality.

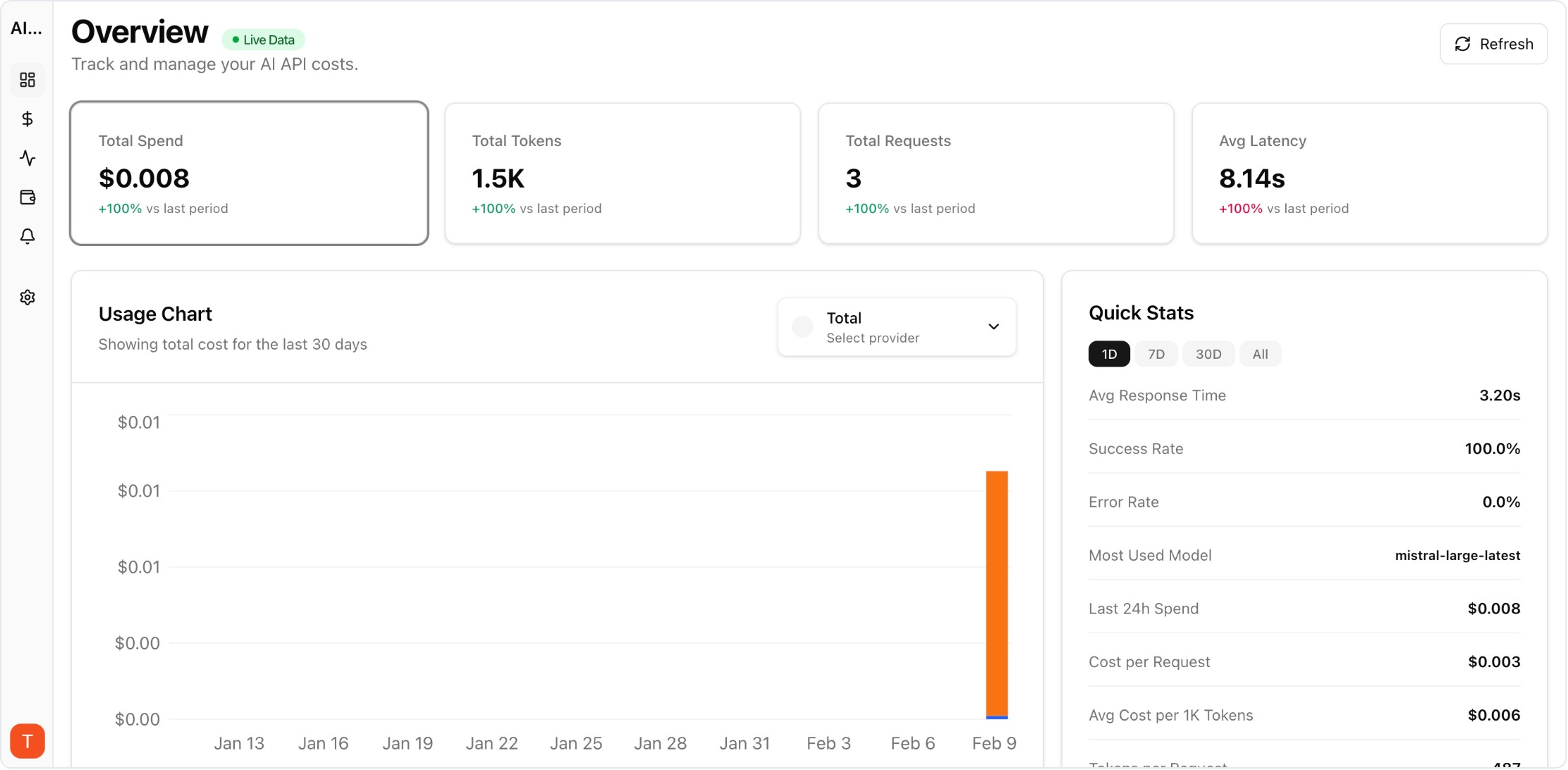


Sample tasks by workflow type, language, and complexity to reflect real demand. Overly narrow benchmark sets overfit routing policies to artificial conditions.
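One way to keep the benchmark set representative is stratified sampling. The sketch below is a minimal illustration, assuming each task is a dict carrying `workflow`, `language`, and `complexity` fields; those field names, the `stratified_sample` helper, and the sample data are hypothetical.

```python
import random
from collections import defaultdict

def stratified_sample(tasks, keys, per_stratum, seed=0):
    """Pick up to `per_stratum` tasks from each combination of `keys`."""
    rng = random.Random(seed)  # fixed seed keeps the benchmark set repeatable
    strata = defaultdict(list)
    for task in tasks:
        strata[tuple(task[k] for k in keys)].append(task)
    sample = []
    for stratum in sorted(strata):  # sorted so the output order is deterministic
        bucket = strata[stratum]
        sample.extend(rng.sample(bucket, min(per_stratum, len(bucket))))
    return sample

# Hypothetical task log; real data would come from production traffic.
tasks = [
    {"id": 1, "workflow": "summarize", "language": "en", "complexity": "low"},
    {"id": 2, "workflow": "summarize", "language": "en", "complexity": "low"},
    {"id": 3, "workflow": "summarize", "language": "en", "complexity": "low"},
    {"id": 4, "workflow": "summarize", "language": "de", "complexity": "high"},
    {"id": 5, "workflow": "extract", "language": "en", "complexity": "high"},
    {"id": 6, "workflow": "extract", "language": "en", "complexity": "high"},
]

sample = stratified_sample(tasks, ("workflow", "language", "complexity"), per_stratum=2)
```

Capping each stratum stops the most common workflow from dominating the set, while rare combinations such as the single German task still appear.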

Use structured scoring rubrics with clear pass-fail thresholds for formatting, factuality, and policy adherence. Consistent criteria reduce evaluator variance.
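A rubric of this kind can be expressed as data so that every evaluator applies the same thresholds. The following is a sketch under assumptions: the criterion names mirror the three mentioned above, while the threshold values, the `Criterion` class, and the `evaluate` function are illustrative only.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Criterion:
    name: str
    threshold: float  # minimum score in [0, 1] required to pass

# Illustrative thresholds; tune these to your own quality bar.
RUBRIC = (
    Criterion("formatting", 1.0),
    Criterion("factuality", 0.9),
    Criterion("policy_adherence", 1.0),
)

def evaluate(scores, rubric=RUBRIC):
    """Return per-criterion pass/fail plus an overall verdict."""
    # A missing score counts as 0.0, so an unscored criterion fails.
    results = {c.name: scores.get(c.name, 0.0) >= c.threshold for c in rubric}
    return {"criteria": results, "passed": all(results.values())}

verdict = evaluate({"formatting": 1.0, "factuality": 0.95, "policy_adherence": 1.0})
failed = evaluate({"formatting": 1.0, "factuality": 0.7, "policy_adherence": 1.0})
```

A response passes only when every criterion clears its threshold, which keeps the verdict binary and easy to compare across evaluators.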

Collect percentile latency and timeout frequency for each model-provider pair. Tail latency drives user frustration and should influence routing decisions directly.
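As a rough illustration, the per-pair statistics could be computed as follows. The record layout (a `None` latency marks a timeout), the nearest-rank percentile method, and the model and provider names are assumptions made for this sketch.

```python
import math
from collections import defaultdict

def nearest_rank(sorted_values, pct):
    """Nearest-rank percentile of an ascending, non-empty list."""
    rank = max(1, math.ceil(pct / 100 * len(sorted_values)))
    return sorted_values[rank - 1]

def latency_report(records, percentiles=(50, 95, 99)):
    """records: iterable of (model, provider, latency_ms); None means timeout."""
    grouped = defaultdict(list)
    for model, provider, latency in records:
        grouped[(model, provider)].append(latency)
    report = {}
    for pair, values in grouped.items():
        completed = sorted(v for v in values if v is not None)
        stats = {"timeout_rate": (len(values) - len(completed)) / len(values)}
        for p in percentiles:
            # Percentiles cover completed requests only.
            stats[f"p{p}"] = nearest_rank(completed, p) if completed else None
        report[pair] = stats
    return report

# Hypothetical measurements for one model-provider pair.
records = [
    ("model-a", "provider-x", 100),
    ("model-a", "provider-x", 200),
    ("model-a", "provider-x", 300),
    ("model-a", "provider-x", 400),
    ("model-a", "provider-x", None),
]
report = latency_report(records)
```

Because timeouts are excluded from the percentiles here, read the percentile figures together with `timeout_rate`; a pair with a good p95 and a high timeout rate still has a poor tail.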

Compare cost per successful high-quality response rather than raw per-token pricing. This metric reflects practical value delivered to users.
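The metric divides total spend, including spend on failed attempts, by the number of responses that pass the quality bar. A minimal sketch, assuming each benchmark run is recorded with a `cost_usd` and a `passed` flag (both hypothetical field names, with made-up figures):

```python
def cost_per_success(runs):
    """Total spend divided by the number of runs that passed the quality bar."""
    total = sum(r["cost_usd"] for r in runs)
    wins = sum(1 for r in runs if r["passed"])
    # With zero passing runs, the cost of a success is unbounded.
    return total / wins if wins else float("inf")

# Hypothetical figures: the cheaper model passes 5 of 10 runs,
# the pricier model passes 9 of 10.
cheap = [{"cost_usd": 0.002, "passed": i < 5} for i in range(10)]
premium = [{"cost_usd": 0.003, "passed": i < 9} for i in range(10)]

cheap_cps = cost_per_success(cheap)
premium_cps = cost_per_success(premium)
```

In this made-up example the model with the lower per-request price turns out to be more expensive per successful response, because half of its spend goes to answers that fail.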

Run policy simulations for peak and off-peak periods before deployment. Simulations expose tradeoffs between budget efficiency and reliability.
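A simulation does not need to be elaborate to be useful. The sketch below computes expected cost and failure rate for a simple primary-then-fallback policy in each traffic period. It assumes failures on the two providers are independent, and all provider names, costs, and failure rates are invented for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ProviderStats:
    cost_usd: float      # average cost per attempt
    failure_rate: float  # share of attempts that time out or fail the rubric

def simulate(policy, stats, requests):
    """Expected cost and failure rate for a (primary, fallback) policy."""
    primary, fallback = stats[policy[0]], stats[policy[1]]
    # Every request hits the primary; only failed ones also pay for the fallback.
    cost = requests * (primary.cost_usd + primary.failure_rate * fallback.cost_usd)
    # A request fails only if both attempts fail (independence assumed).
    failure_rate = primary.failure_rate * fallback.failure_rate
    return {"expected_cost_usd": cost, "failure_rate": failure_rate}

# Hypothetical benchmark results per traffic period.
PERIODS = {
    "peak": {
        "requests": 10_000,
        "stats": {
            "budget": ProviderStats(0.001, 0.20),
            "premium": ProviderStats(0.004, 0.02),
        },
    },
    "off_peak": {
        "requests": 2_000,
        "stats": {
            "budget": ProviderStats(0.001, 0.05),
            "premium": ProviderStats(0.004, 0.01),
        },
    },
}

results = {
    period: simulate(("budget", "premium"), cfg["stats"], cfg["requests"])
    for period, cfg in PERIODS.items()
}
```

Running the same function with the policy reversed, or with a different fallback, gives a side-by-side view of what each option costs and how often it fails in each period.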

Re-run benchmarks after prompt revisions, model updates, or provider incidents. Continuous benchmarking keeps routing policies aligned with current operating conditions.
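One lightweight way to detect when a re-run is due is to fingerprint the inputs the last benchmark was based on. This is only a sketch: the choice of inputs (prompt template, model version, a list of provider incident IDs) and both function names are assumptions.

```python
import hashlib
import json

def benchmark_fingerprint(prompt_template, model_version, incident_ids):
    """Hash the inputs whose change should trigger a fresh benchmark run."""
    payload = json.dumps(
        {
            "prompt": prompt_template,
            "model": model_version,
            "incidents": sorted(incident_ids),  # order-insensitive
        },
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def needs_rerun(last_fingerprint, current_fingerprint):
    """True when any tracked input changed since the last benchmark."""
    return last_fingerprint != current_fingerprint

# Hypothetical values for illustration.
baseline = benchmark_fingerprint("Summarize: {text}", "model-a-2024-01", [])
unchanged = benchmark_fingerprint("Summarize: {text}", "model-a-2024-01", [])
revised = benchmark_fingerprint("Summarize briefly: {text}", "model-a-2024-01", [])
```

Storing the fingerprint alongside each benchmark result lets a scheduled job compare it with the current configuration and queue a re-run only when something has actually changed.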


Benchmark-driven routing reduces both risk and guesswork. With consistent evaluations, teams can update provider strategy quickly while protecting quality and budget targets.