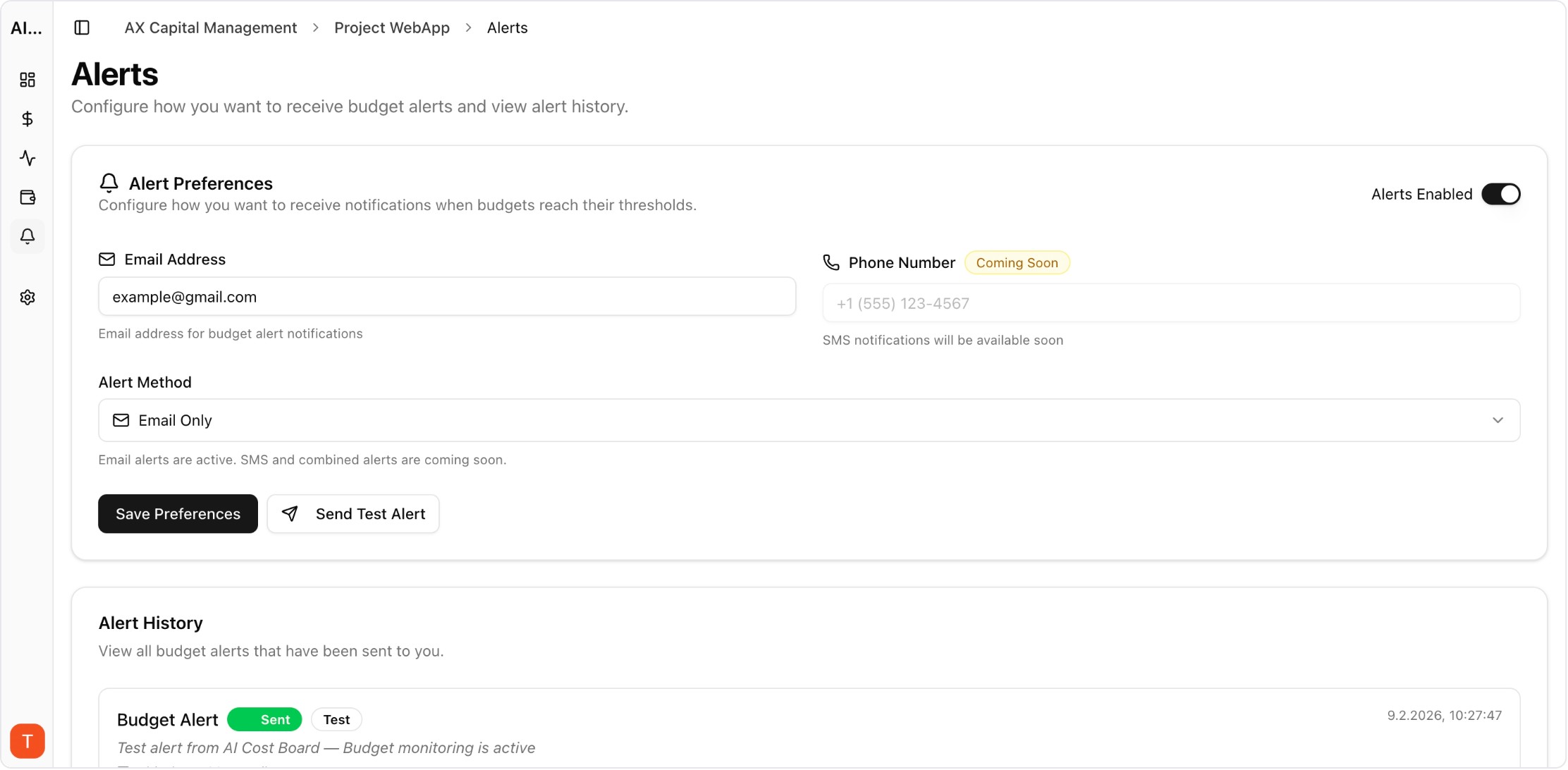

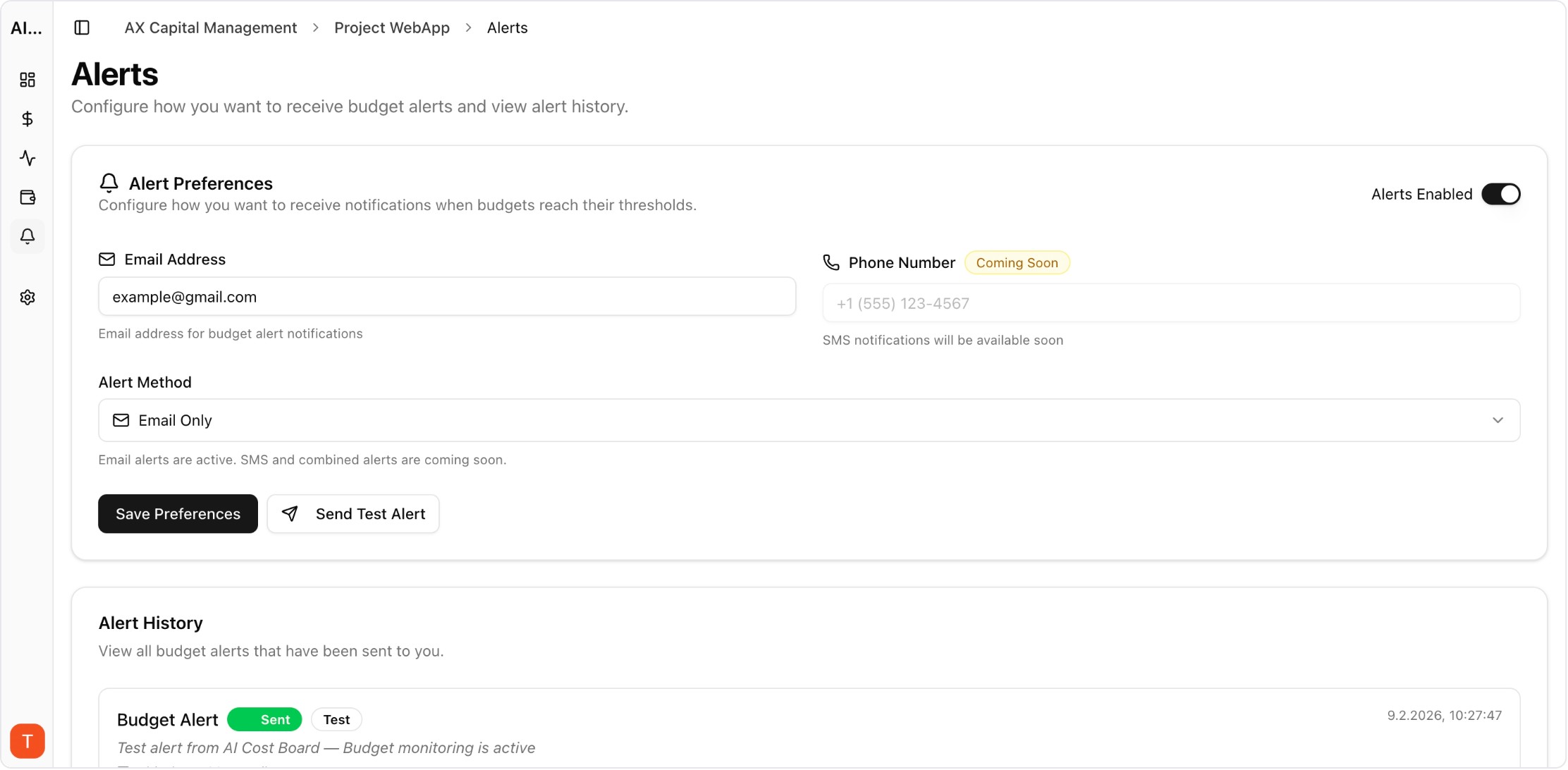

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

AI agents running multi-step workflows present the hardest cost monitoring challenge in production AI. A single agent invocation can trigger dozens of LLM calls across multiple models, with costs varying wildly based on task complexity. Traditional per-request monitoring breaks down when one user action generates an unpredictable chain of API calls. This guide covers strategies for tracking, attributing, and controlling agent workflow costs.

Unlike single-shot API calls, agent workflows are non-deterministic in both step count and cost. An agent might solve a task in 3 LLM calls or 30, depending on complexity, tool availability, and retry logic. This variance makes traditional budgeting unreliable. Teams need per-workflow cost tracking with statistical baselines to distinguish normal variance from genuine anomalies.

Tag every LLM API call with a workflow ID, step number, and agent identifier. This creates a cost trace that maps individual requests back to the initiating workflow. Use project workspaces in your monitoring tool to group related workflows. AI Cost Board supports metadata tagging on API keys to enable workflow-level cost attribution automatically.
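As an illustration of the tagging idea, here is a minimal in-memory sketch of a cost trace. The names `LLMCallRecord` and `CostTrace` are hypothetical and are not part of AI Cost Board or any other tool; the sketch also assumes the cost of each call is already known in USD when it is recorded.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LLMCallRecord:
    """One LLM API call, tagged so it can be traced back to its workflow."""
    workflow_id: str  # ID of the workflow that initiated the call
    agent_id: str     # which agent made the call
    step: int         # position of the call within the workflow
    model: str
    cost_usd: float


class CostTrace:
    """Hypothetical in-memory store of tagged call records."""

    def __init__(self) -> None:
        self._records: list[LLMCallRecord] = []

    def record(self, workflow_id: str, agent_id: str, step: int,
               model: str, cost_usd: float) -> None:
        self._records.append(
            LLMCallRecord(workflow_id, agent_id, step, model, cost_usd)
        )

    def cost_by_workflow(self) -> dict[str, float]:
        """Total cost per workflow ID."""
        totals: dict[str, float] = defaultdict(float)
        for r in self._records:
            totals[r.workflow_id] += r.cost_usd
        return dict(totals)

    def steps_for(self, workflow_id: str) -> list[LLMCallRecord]:
        """All calls for one workflow, ordered by step number."""
        matching = [r for r in self._records if r.workflow_id == workflow_id]
        return sorted(matching, key=lambda r: r.step)
```

In a real deployment the records would more likely be emitted as structured logs or request metadata than held in memory; the point is only that every call carries the three tags, so per-workflow totals reduce to a simple group-by.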

Implement three layers of budget control: (1) a per-workflow maximum, which kills agents that exceed a cost ceiling per execution; (2) a per-user daily budget, which prevents any single user from generating unlimited agent costs; and (3) a per-project monthly budget, which enforces organizational spending limits. These guardrails prevent the most common agent cost incidents: infinite loops and excessive retry chains.
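The three layers can be sketched as a single guard that is charged after every LLM call. This is a minimal illustration under stated assumptions: `BudgetGuard` and `BudgetExceeded` are hypothetical names, spend is held in memory, and the caller is expected to stop the agent when the exception is raised.

```python
from collections import defaultdict
from datetime import date


class BudgetExceeded(RuntimeError):
    """Raised when a charge pushes any budget layer over its limit."""


class BudgetGuard:
    """Hypothetical three-layer budget check: workflow, user/day, project/month."""

    def __init__(self, per_workflow_usd: float, per_user_daily_usd: float,
                 per_project_monthly_usd: float) -> None:
        self.per_workflow_usd = per_workflow_usd
        self.per_user_daily_usd = per_user_daily_usd
        self.per_project_monthly_usd = per_project_monthly_usd
        self._workflow: dict = defaultdict(float)
        self._user_day: dict = defaultdict(float)
        self._project_month: dict = defaultdict(float)

    def charge(self, *, workflow_id: str, user_id: str, project_id: str,
               cost_usd: float, today: date) -> None:
        layers = [
            ("per-workflow", self._workflow, workflow_id,
             self.per_workflow_usd),
            ("per-user daily", self._user_day, (user_id, today),
             self.per_user_daily_usd),
            ("per-project monthly", self._project_month,
             (project_id, today.year, today.month),
             self.per_project_monthly_usd),
        ]
        # The money is already spent, so record it in every layer first.
        for _, ledger, key, _ in layers:
            ledger[key] += cost_usd
        # Then fail on the narrowest layer that is over its limit.
        for name, ledger, key, limit in layers:
            if ledger[key] > limit:
                raise BudgetExceeded(
                    f"{name} budget exceeded: {ledger[key]:.4f} > {limit:.4f} USD"
                )
```

Checking after the call means a workflow can overshoot its ceiling by at most one call's cost. A stricter variant would estimate the cost before the call and refuse to make it.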

Build baseline cost distributions for each workflow type. Alert when an individual execution exceeds 3x the median cost or when aggregate daily spend deviates by more than 2 standard deviations from the rolling average. Anomaly detection for agents should be more sensitive than for single-shot APIs because both the cost variance and the potential for runaway spending are much higher.
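Both alert rules can be written directly with Python's standard `statistics` module. The function names below are hypothetical, and the sketch assumes the baseline and the rolling window are passed in as plain lists of past costs for one workflow type.

```python
from statistics import mean, median, stdev


def is_execution_anomalous(cost_usd: float, baseline_costs: list[float],
                           multiplier: float = 3.0) -> bool:
    """True if one execution costs more than `multiplier` x the baseline median."""
    if not baseline_costs:
        return False  # no baseline yet, so nothing to compare against
    return cost_usd > multiplier * median(baseline_costs)


def is_daily_spend_anomalous(today_usd: float, recent_daily_usd: list[float],
                             max_sigma: float = 2.0) -> bool:
    """True if today's spend is more than `max_sigma` standard deviations
    away from the mean of the rolling window."""
    if len(recent_daily_usd) < 2:
        return False  # stdev needs at least two data points
    mu = mean(recent_daily_usd)
    sigma = stdev(recent_daily_usd)
    if sigma == 0:
        return today_usd != mu  # flat history: any change is a deviation
    return abs(today_usd - mu) > max_sigma * sigma
```

For example, with a baseline of `[0.10, 0.12, 0.08, 0.11, 0.09]` the median is 0.10, so an execution costing 0.35 is flagged while one costing 0.25 is not. The median is used for single executions because it is less distorted by earlier runaway runs than the mean.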

Three optimization strategies work well for agents: (1) Use cheaper models for intermediate reasoning steps and reserve expensive models for final outputs. (2) Implement step caching so agents do not re-derive information they have already computed. (3) Set maximum step counts per workflow to prevent infinite chains. Together, these reduce costs by 30-50% without meaningfully impacting agent task completion.
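The three strategies can be combined in one small wrapper around the model call. This is a sketch, not a definitive implementation: `StepRunner` and `StepLimitExceeded` are hypothetical names, `call_model` stands in for whatever client function your framework uses, and the cache is keyed on the exact prompt text, which only helps when steps repeat verbatim.

```python
from typing import Callable


class StepLimitExceeded(RuntimeError):
    """Raised when a workflow tries to exceed its maximum step count."""


class StepRunner:
    """Hypothetical wrapper: model routing, step caching, and a step cap."""

    def __init__(self, call_model: Callable[[str, str], str],
                 cheap_model: str, expensive_model: str,
                 max_steps: int) -> None:
        self.call_model = call_model  # assumed signature: (model, prompt) -> text
        self.cheap_model = cheap_model
        self.expensive_model = expensive_model
        self.max_steps = max_steps
        self.steps_used = 0
        self._cache: dict[tuple[str, str], str] = {}

    def run_step(self, prompt: str, *, final: bool = False) -> str:
        # (1) Route intermediate steps to the cheap model, final output
        #     to the expensive one.
        model = self.expensive_model if final else self.cheap_model
        # (2) Reuse earlier results; a cache hit costs nothing and does
        #     not count against the step limit.
        key = (model, prompt)
        if key in self._cache:
            return self._cache[key]
        # (3) Refuse to make another paid call once the cap is reached.
        if self.steps_used >= self.max_steps:
            raise StepLimitExceeded(
                f"workflow exceeded {self.max_steps} steps"
            )
        self.steps_used += 1
        result = self.call_model(model, prompt)
        self._cache[key] = result
        return result
```

Create one `StepRunner` per workflow execution so the step counter and cache are scoped to that run. Sharing a cache across executions is possible but needs an invalidation policy that this sketch does not cover.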

Agent cost monitoring requires a fundamentally different approach than single-request tracking. Build attribution, guardrails, and anomaly detection into your agent framework from the start.