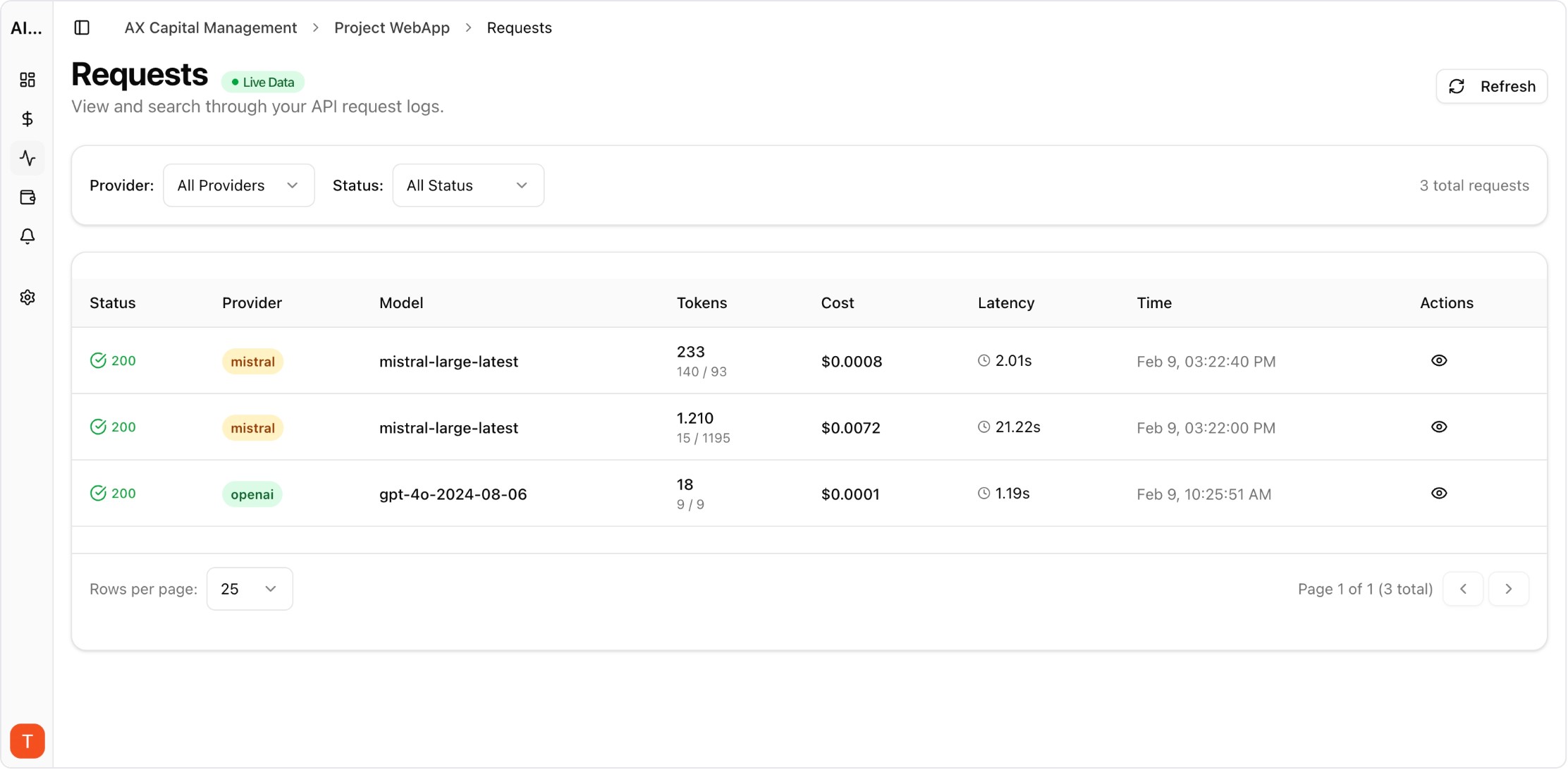

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

Multi-provider architecture improves resilience, but budgeting often remains single-provider and reactive. A unified budget model helps teams avoid hidden overspend when traffic shifts between providers.

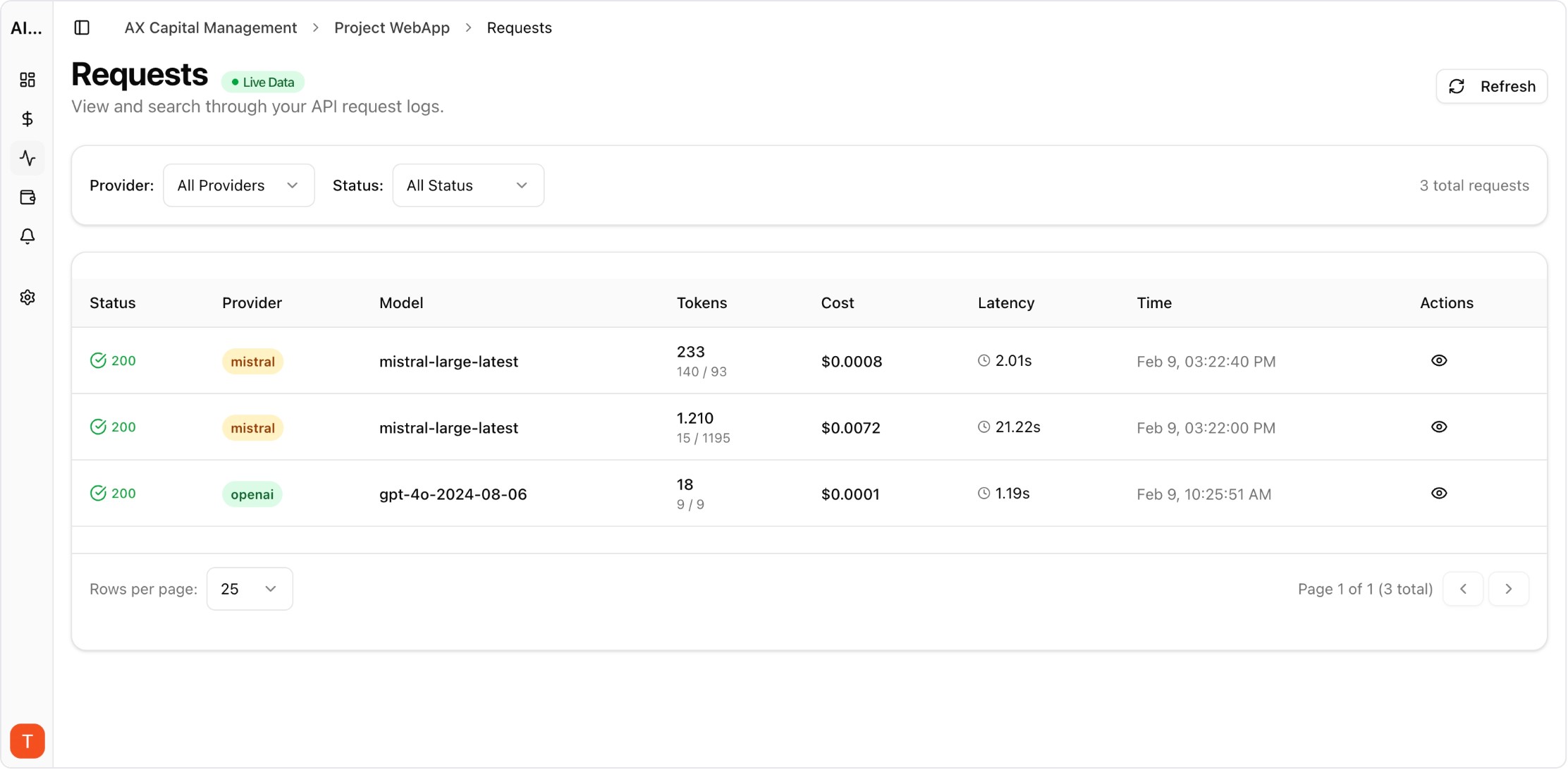

Define a total monthly AI budget plus per-provider ceilings. These dual caps prevent overreliance on any single vendor when fallback routing unexpectedly shifts traffic toward it.
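A minimal Python sketch of the dual-cap check, assuming spend is tracked in USD per provider for the current month. The `BudgetCaps` class, its method names, the provider names, and the figures are hypothetical. A charge is allowed only when it fits under both the provider's ceiling and the global monthly cap.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class BudgetCaps:
    """Hypothetical dual-cap budget: one global monthly cap plus per-provider ceilings (USD)."""
    monthly_total: float
    provider_ceilings: dict[str, float]
    spent: dict[str, float] = field(default_factory=dict)

    def total_spent(self) -> float:
        return sum(self.spent.values())

    def can_spend(self, provider: str, amount: float) -> bool:
        # Providers without a configured ceiling get 0, so unknown routes are blocked.
        ceiling = self.provider_ceilings.get(provider, 0.0)
        within_provider = self.spent.get(provider, 0.0) + amount <= ceiling
        within_total = self.total_spent() + amount <= self.monthly_total
        # Both caps must hold; either one alone is not enough.
        return within_provider and within_total

    def record(self, provider: str, amount: float) -> None:
        self.spent[provider] = self.spent.get(provider, 0.0) + amount


# Illustrative numbers: ceilings sum to 12,000 while the global cap is 10,000.
caps = BudgetCaps(monthly_total=10_000, provider_ceilings={"provider_a": 7_000, "provider_b": 5_000})
caps.record("provider_a", 6_900)
print(caps.can_spend("provider_a", 200))    # False: provider_a would exceed its 7,000 ceiling
print(caps.can_spend("provider_b", 3_000))  # True: 3,000 fits under both caps
print(caps.can_spend("provider_b", 3_200))  # False: total spend would reach 10,100
```

Letting the per-provider ceilings sum to more than the global cap leaves headroom for fallback routing, while the global cap still bounds total spend.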

Critical production workflows should have protected budget bands, while lower-priority experiments get flexible caps. Priority-based allocation preserves the core user experience when spend comes under pressure.
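A sketch of priority-based allocation, assuming each workload is tagged either protected (critical production) or flexible (experiments). The `Workload` type, the `allocate` function, and the workload names are illustrative. Protected bands are funded first; flexible caps are scaled down proportionally to fit whatever budget remains.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    requested: float  # monthly spend requested, USD
    protected: bool   # True for critical production workflows


def allocate(total_budget: float, workloads: list[Workload]) -> dict[str, float]:
    """Fund protected bands first, then share the remainder among flexible workloads."""
    allocation: dict[str, float] = {}
    remaining = total_budget
    # Protected workloads are funded in list order, so list the most critical first.
    for w in workloads:
        if w.protected:
            grant = min(w.requested, remaining)
            allocation[w.name] = grant
            remaining -= grant
    flexible = [w for w in workloads if not w.protected]
    requested_flex = sum(w.requested for w in flexible)
    # Scale flexible caps proportionally when the remainder cannot cover every request.
    scale = min(1.0, remaining / requested_flex) if requested_flex > 0 else 0.0
    for w in flexible:
        allocation[w.name] = w.requested * scale
    return allocation


plan = allocate(10_000, [
    Workload("support_bot", 6_000, protected=True),
    Workload("search_answers", 2_000, protected=True),
    Workload("summarizer_experiment", 3_000, protected=False),
    Workload("prototype", 1_000, protected=False),
])
print(plan)
# {'support_bot': 6000, 'search_answers': 2000, 'summarizer_experiment': 1500.0, 'prototype': 500.0}
```

Experiments absorb the shortfall here (each gets half its request), while both protected bands stay fully funded.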

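Fallback decisions can change token economics significantly: providers price tokens differently, and the same request can consume a different number of tokens on each provider. Simulate the expected spend impact of each fallback route before activating a policy globally.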
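A sketch of per-route spend simulation, assuming flat per-1,000-token prices and a fixed share of traffic routed to the fallback. `Pricing`, `simulate_fallback`, and the `token_multiplier` parameter (an assumed change in token counts on the fallback provider) are hypothetical, and all prices are placeholders rather than real provider rates.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Pricing:
    input_per_1k: float   # USD per 1,000 input tokens (placeholder values)
    output_per_1k: float  # USD per 1,000 output tokens


def request_cost(p: Pricing, input_tokens: float, output_tokens: float) -> float:
    return (input_tokens * p.input_per_1k + output_tokens * p.output_per_1k) / 1000


def simulate_fallback(requests: int, input_tokens: int, output_tokens: int,
                      primary: Pricing, fallback: Pricing,
                      fallback_rate: float, token_multiplier: float = 1.0) -> dict[str, float]:
    """Estimate monthly spend with and without a fallback route."""
    primary_cost = request_cost(primary, input_tokens, output_tokens)
    # token_multiplier is an assumption to calibrate against real fallback traffic.
    fallback_cost = request_cost(fallback, input_tokens * token_multiplier,
                                 output_tokens * token_multiplier)
    baseline = requests * primary_cost
    routed = requests * fallback_rate
    with_fallback = (requests - routed) * primary_cost + routed * fallback_cost
    return {"baseline": baseline, "with_fallback": with_fallback,
            "delta": with_fallback - baseline}


# Simulate each candidate route before enabling it (all figures hypothetical).
routes = {
    "fallback_to_provider_b": Pricing(0.002, 0.006),
    "fallback_to_provider_c": Pricing(0.0005, 0.0015),
}
for name, fb in routes.items():
    result = simulate_fallback(100_000, 1_000, 500, Pricing(0.001, 0.003), fb,
                               fallback_rate=0.10, token_multiplier=1.2)
    print(name, {k: round(v, 2) for k, v in result.items()})
```

With these placeholder numbers, routing 10% of traffic to the pricier route adds spend, while the cheaper route reduces it, which is why each route is worth simulating separately.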

Use a blended metric across providers, such as cost per successful request, so teams evaluate real operating efficiency instead of comparing isolated invoice totals without context.
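One way to define a blended metric is cost per successful request, pooled across providers. This sketch assumes each provider reports spend and a count of successful requests for the same period; what counts as "successful" is a team-specific assumption, and the names and figures are illustrative.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ProviderUsage:
    provider: str
    spend: float    # USD for the period
    successes: int  # requests that produced an accepted result (team-defined)


def cost_per_success(rows: list[ProviderUsage]) -> dict[str, float]:
    """Per-provider and pooled ("blended") cost per successful request."""
    metrics = {r.provider: r.spend / r.successes for r in rows if r.successes > 0}
    total_spend = sum(r.spend for r in rows)
    total_successes = sum(r.successes for r in rows)
    # Pooling spend and successes weights each provider by the traffic it actually served.
    metrics["blended"] = total_spend / total_successes if total_successes else float("inf")
    return metrics


usage = [
    ProviderUsage("provider_a", spend=300.0, successes=1_000),
    ProviderUsage("provider_b", spend=100.0, successes=250),
]
print(cost_per_success(usage))
# {'provider_a': 0.3, 'provider_b': 0.4, 'blended': 0.32}
```

In this illustrative data, provider_b has the smaller invoice (100 vs 300) but the higher cost per successful request (0.40 vs 0.30), which is the context an invoice total alone hides.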

Review burn rate weekly, and rebalance traffic when a provider exceeds its planned share. Frequent checkpoints reduce the need for disruptive end-of-month interventions.
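A weekly checkpoint can be reduced to two questions: does the current burn rate project past the monthly budget, and which providers are above their planned share? This sketch assumes a linear projection from spend to date and measures share by spend rather than request count; the `weekly_review` function, the 5-percentage-point `tolerance`, and the figures are illustrative.

```python
from __future__ import annotations


def weekly_review(spend_to_date: dict[str, float], planned_share: dict[str, float],
                  days_elapsed: int, days_in_month: int, monthly_budget: float,
                  tolerance: float = 0.05) -> dict[str, object]:
    """Project month-end spend and flag providers above their planned share."""
    total = sum(spend_to_date.values())
    # Linear projection: assumes the average daily burn rate so far continues all month.
    projected = total / days_elapsed * days_in_month
    rebalance = []
    for provider, spent in spend_to_date.items():
        actual_share = spent / total if total else 0.0
        if actual_share > planned_share.get(provider, 0.0) + tolerance:
            rebalance.append(provider)
    return {
        "projected_month_end": projected,
        "over_budget": projected > monthly_budget,
        "rebalance": sorted(rebalance),
    }


report = weekly_review(
    spend_to_date={"provider_a": 2_800.0, "provider_b": 700.0},
    planned_share={"provider_a": 0.6, "provider_b": 0.4},
    days_elapsed=7, days_in_month=28, monthly_budget=12_000.0,
)
print(report)
# {'projected_month_end': 14000.0, 'over_budget': True, 'rebalance': ['provider_a']}
```

Catching the 14,000 projection in week one leaves three weeks to shift traffic away from provider_a, instead of discovering the overrun on the invoice.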

Provider budgeting data should feed contract negotiations with evidence on traffic share, reliability, and cost trends. Procurement gains leverage when that data is granular and recent.
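To make that evidence concrete, this sketch summarizes per-provider traffic share, reliability (1 minus the error rate), and month-over-month spend change from monthly records. The `MonthlyStats` record, its month label format, the field names, and the figures are assumptions; a team's own telemetry schema will differ.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class MonthlyStats:
    provider: str
    month: str      # e.g. "2024-06" (illustrative label format)
    requests: int
    errors: int
    spend: float    # USD


def negotiation_summary(stats: list[MonthlyStats], month: str,
                        prev_month: str) -> dict[str, dict[str, Optional[float]]]:
    """Traffic share, success rate, and spend change per provider for one month."""
    current = {s.provider: s for s in stats if s.month == month}
    previous = {s.provider: s for s in stats if s.month == prev_month}
    total_requests = sum(s.requests for s in current.values())
    summary: dict[str, dict[str, Optional[float]]] = {}
    for provider, s in current.items():
        prev = previous.get(provider)
        summary[provider] = {
            "traffic_share": s.requests / total_requests if total_requests else 0.0,
            "success_rate": 1 - s.errors / s.requests if s.requests else None,
            # None when there is no prior month to compare against.
            "spend_change_pct": (s.spend - prev.spend) / prev.spend * 100
            if prev and prev.spend else None,
        }
    return summary


records = [
    MonthlyStats("provider_a", "2024-05", 7_000, 70, 1_000.0),
    MonthlyStats("provider_a", "2024-06", 8_000, 80, 1_200.0),
    MonthlyStats("provider_b", "2024-06", 2_000, 10, 400.0),
]
for provider, row in negotiation_summary(records, "2024-06", "2024-05").items():
    print(provider, row)
```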

Unified multi-provider budgeting keeps a flexible architecture financially sustainable. Use provider-level analytics and alerting every week to balance resilience, quality, and spend.