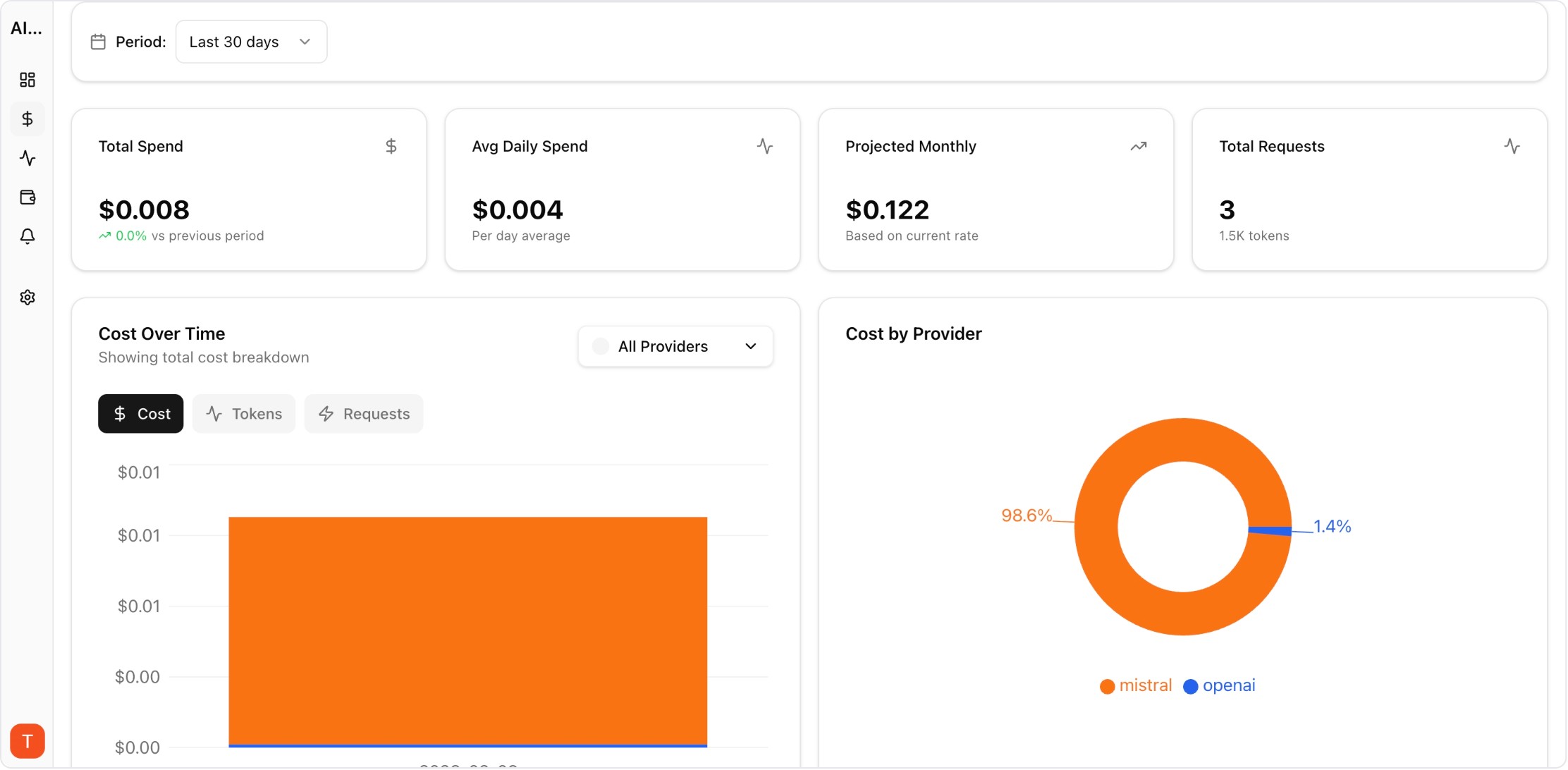

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

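Agencies running AI features for many clients face two requirements that pull against each other: operations must be standardized across the portfolio, yet each client must stay strictly isolated. Without workspace-level observability, one noisy account can dominate aggregate dashboards and hide risk in the rest of the portfolio.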

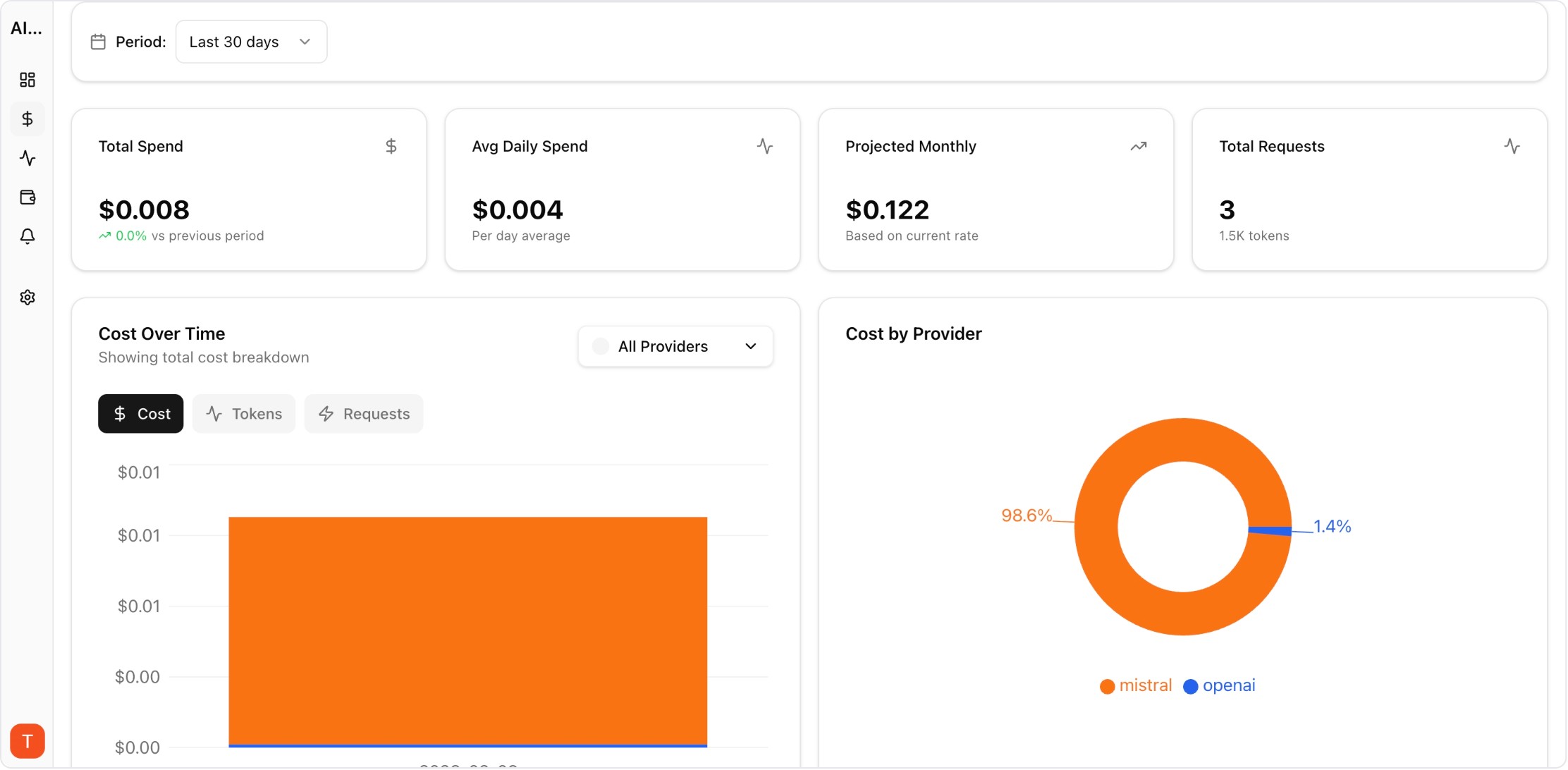

Every client should have separate workspace boundaries for keys, budgets, and logs. Isolation simplifies reporting and reduces cross-client security and billing risks.
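A minimal sketch of what a workspace boundary could look like in code. The `Workspace` and `WorkspaceRegistry` classes, the field names, and the `vault://` key references are all hypothetical and are not taken from any specific product; the point is only that keys, budgets, and logs live on one per-client object and are never shared.

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """One client's isolated boundary: its own key, budget, and log stream."""
    client_id: str
    api_key_ref: str            # reference to a stored secret, never the raw key
    monthly_budget_usd: float
    spend_usd: float = 0.0
    logs: list = field(default_factory=list)

    def record(self, request_id: str, cost_usd: float) -> None:
        # Spend and logs accrue only to this workspace.
        self.spend_usd += cost_usd
        self.logs.append({"request_id": request_id, "cost_usd": cost_usd})

    def over_budget(self) -> bool:
        return self.spend_usd > self.monthly_budget_usd


class WorkspaceRegistry:
    """Holds one workspace per client; every lookup is scoped by client id."""

    def __init__(self) -> None:
        self._by_client = {}

    def add(self, ws: Workspace) -> None:
        if ws.client_id in self._by_client:
            raise ValueError(f"workspace already exists for {ws.client_id}")
        self._by_client[ws.client_id] = ws

    def get(self, client_id: str) -> Workspace:
        return self._by_client[client_id]


# Hypothetical clients and secret references, for illustration only.
registry = WorkspaceRegistry()
registry.add(Workspace("acme", "vault://acme/llm-key", monthly_budget_usd=500.0))
registry.add(Workspace("globex", "vault://globex/llm-key", monthly_budget_usd=200.0))

registry.get("acme").record("req-1", 0.42)
```

Because spend and logs are attributes of a single workspace, a per-client report reads from one object, and a billing mistake in one account cannot leak into another.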

Track a consistent core set across clients: cost per request, p95 latency, error rate, and retry rate. Standardization enables faster troubleshooting and portfolio benchmarking.
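The four core metrics can be computed from request-level records with a few lines of code. The sketch below assumes a hypothetical `RequestRecord` shape, uses the nearest-rank method for p95, and defines retry rate as the share of requests retried at least once; other definitions (for example, retries per request) are equally valid as long as every client uses the same one.

```python
from dataclasses import dataclass


@dataclass
class RequestRecord:
    cost_usd: float
    latency_ms: float
    ok: bool        # False if the request ultimately failed
    retries: int    # number of retry attempts made for this request


def p95(values):
    # Nearest-rank percentile: the smallest value with at least 95% of
    # samples at or below it. Integer arithmetic avoids float rounding.
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100   # ceil(0.95 * n)
    return ordered[rank - 1]


def core_metrics(records):
    n = len(records)
    if n == 0:
        raise ValueError("no records to summarize")
    return {
        "cost_per_request_usd": sum(r.cost_usd for r in records) / n,
        "p95_latency_ms": p95([r.latency_ms for r in records]),
        "error_rate": sum(1 for r in records if not r.ok) / n,
        "retry_rate": sum(1 for r in records if r.retries > 0) / n,
    }
```

Running the same `core_metrics` function over each workspace's records yields numbers that are directly comparable across clients, which is what makes portfolio benchmarking possible.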

Client stakeholders need outcome-level metrics, while engineering teams need request-level diagnostics. Role-specific views improve clarity without exposing unnecessary low-level data.
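One simple way to implement role-specific views is an allow-list of fields per role, applied to a shared summary. The roles and field names below are assumptions made for illustration, not a prescribed schema.

```python
# Hypothetical allow-lists: which summary fields each role may see.
VIEW_FIELDS = {
    "client": {"period", "total_cost_usd", "success_rate"},
    "engineering": {
        "period",
        "total_cost_usd",
        "success_rate",
        "p95_latency_ms",
        "retry_rate",
        "failed_request_ids",
    },
}


def view_for(role, summary):
    """Return only the fields of `summary` that `role` is allowed to see."""
    if role not in VIEW_FIELDS:
        raise ValueError(f"unknown role: {role}")
    allowed = VIEW_FIELDS[role]
    return {key: value for key, value in summary.items() if key in allowed}


summary = {
    "period": "2024-W10",
    "total_cost_usd": 312.5,
    "success_rate": 0.991,
    "p95_latency_ms": 1840.0,
    "retry_rate": 0.04,
    "failed_request_ids": ["req-118", "req-203"],
}

client_view = view_for("client", summary)
engineering_view = view_for("engineering", summary)
```

An allow-list fails closed: a field added to the summary later stays hidden from client stakeholders until someone explicitly exposes it.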

Assign primary and backup owners per client workspace. Clear ownership prevents delayed response during provider incidents or sudden spend anomalies.
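Ownership is easiest to enforce when it is stored as data that alerting can read. The sketch below assumes a hypothetical `OWNERS` table and a set of currently unavailable people (for example, from an on-call calendar); the names are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ownership:
    primary: str
    backup: str


# Hypothetical ownership table: one primary and one backup per workspace.
OWNERS = {
    "acme": Ownership(primary="dana", backup="lee"),
    "globex": Ownership(primary="sam", backup="dana"),
}


def who_to_page(client_id, unavailable):
    """Pick the first available owner, trying the primary before the backup."""
    owners = OWNERS[client_id]
    for person in (owners.primary, owners.backup):
        if person not in unavailable:
            return person
    raise RuntimeError(f"no available owner for workspace {client_id}")
```

For example, `who_to_page("acme", {"dana"})` returns `"lee"`, so a provider incident during the primary owner's absence still reaches a named person.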

Generate recurring summaries for cost trends, reliability events, and optimization opportunities. Consistent reporting improves trust and supports contract renewal discussions.
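A recurring summary can start as a small function that compares the current period with the previous one. This is a sketch under assumptions: the weekly cadence, the input shape, and the wording of the output are illustrative choices.

```python
def percent_change(previous, current):
    """Percent change from previous to current, or None if previous is zero."""
    if previous == 0:
        return None
    return (current - previous) / previous * 100.0


def weekly_summary(client_id, prev_cost_usd, curr_cost_usd, incidents):
    """Build a short plain-text summary for one client workspace."""
    change = percent_change(prev_cost_usd, curr_cost_usd)
    trend = "n/a (no prior spend)" if change is None else f"{change:+.1f}%"
    lines = [
        f"Weekly summary for {client_id}",
        f"Cost: ${curr_cost_usd:.2f} ({trend} vs previous week)",
        f"Reliability events: {len(incidents)}",
    ]
    # List each incident on its own line so the report stays scannable.
    lines.extend(f"  - {item}" for item in incidents)
    return "\n".join(lines)


report = weekly_summary("acme", 100.0, 125.0, ["provider timeout spike"])
```

Generating the same report shape for every client on the same schedule keeps the numbers comparable from one period to the next.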

Document known fixes for common failure patterns, then apply them across similar client setups. Reusable runbooks turn isolated firefighting into scalable operations.

Agency AI operations scale when monitoring is both standardized and isolated. Workspace-aware dashboards and alerts provide the control needed for reliable multi-client delivery.