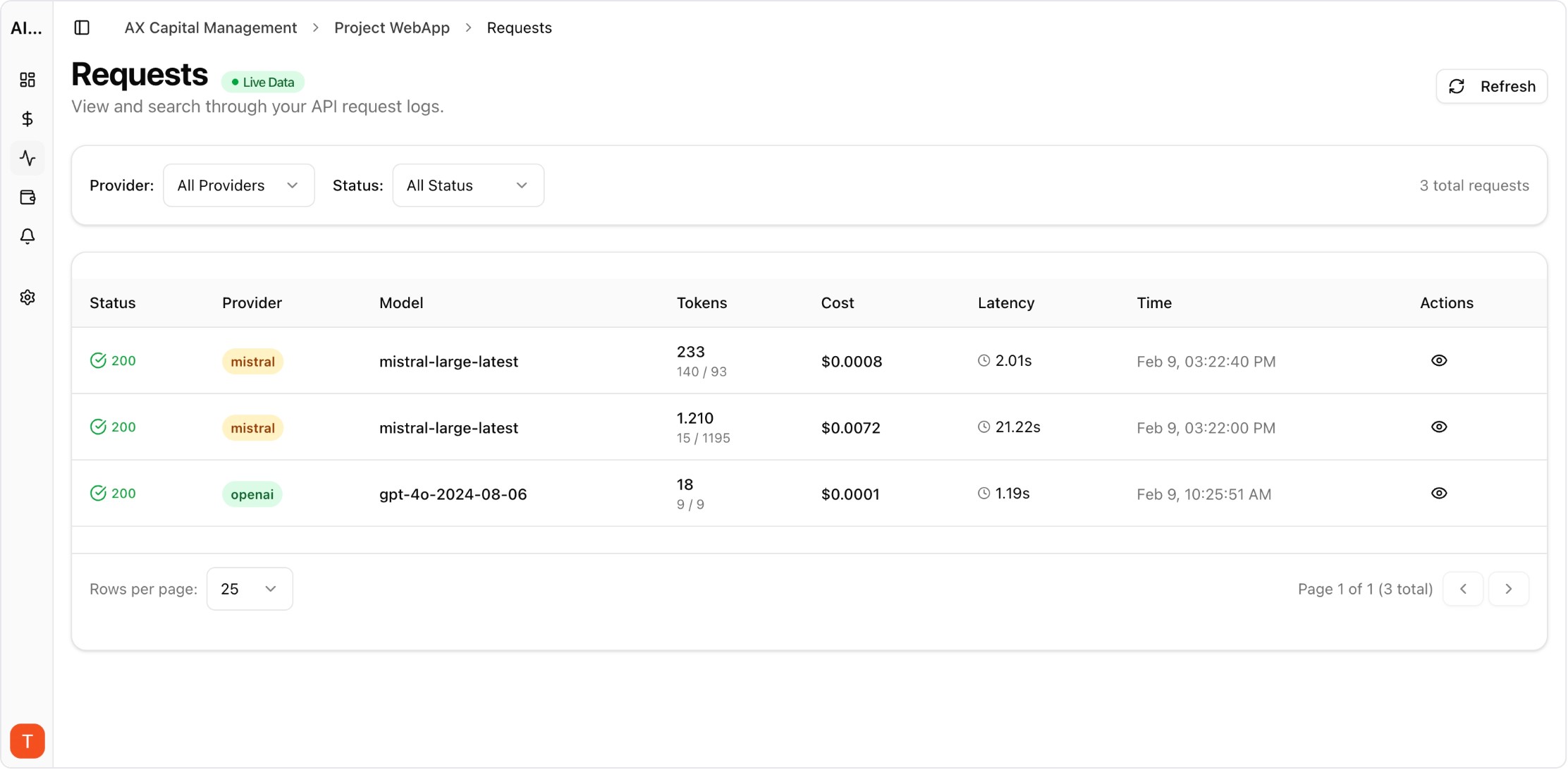

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

As enterprise AI adoption scales from experiments to production, ungoverned LLM API access creates security, cost, and compliance risks. Teams spin up API keys without approval, developers test with production keys, and internal tools run without rate limits. These governance gaps can result in security incidents and budget overruns. Here is how to implement LLM governance that enables innovation while managing risk.

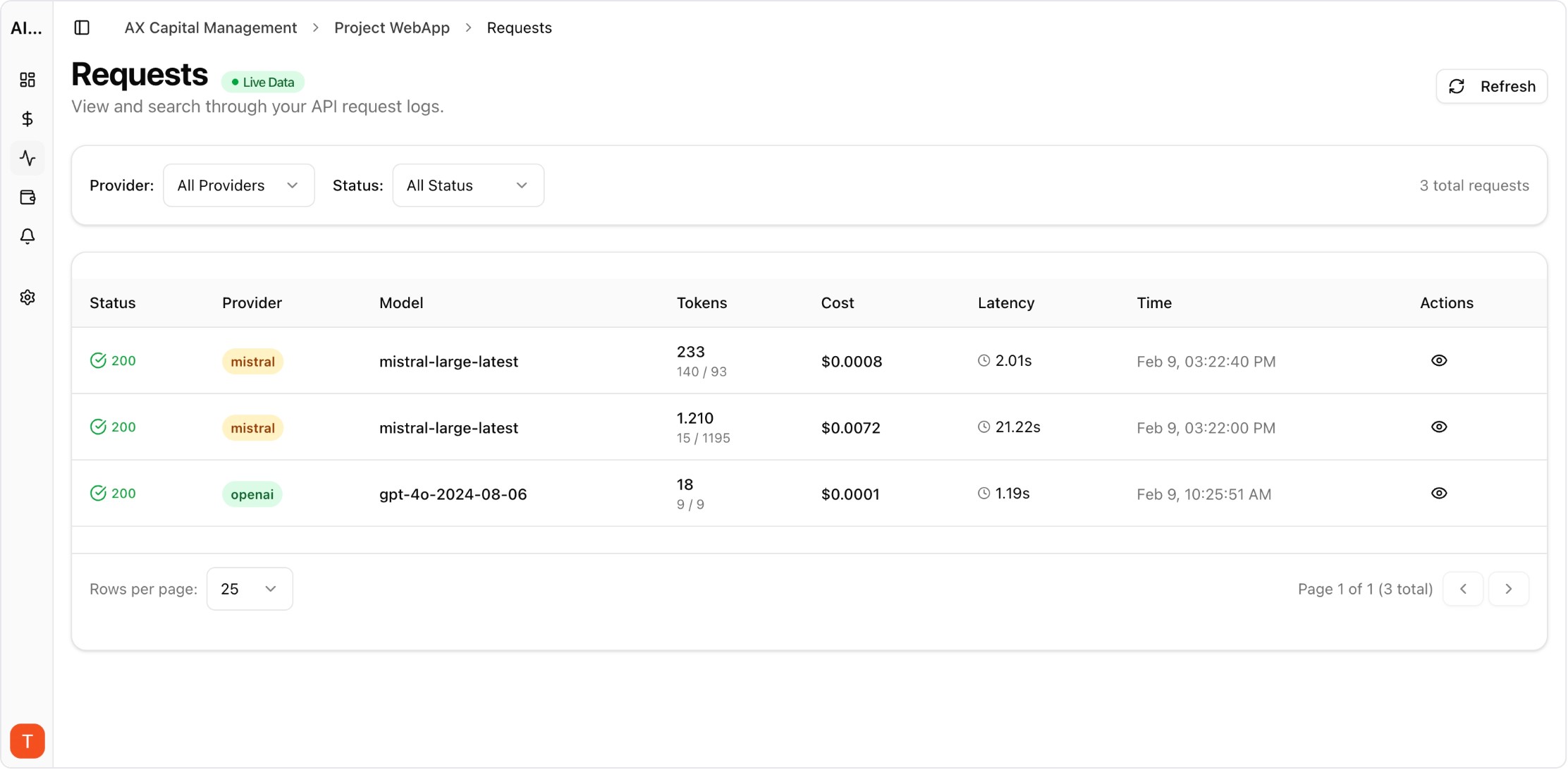


LLM governance encompasses the policies, controls, and tooling that manage how an organization uses LLM APIs. It covers who can create and use API keys, which models are approved for which use cases, spending limits per team and project, rate limiting to prevent abuse, and audit trails for compliance. Without governance, organizations face uncontrolled costs, data leakage, and compliance violations.

Best practices for API key governance: (1) Centralize key creation through an approval workflow. (2) Use separate keys per project and team for attribution. (3) Set expiration dates on all keys. (4) Implement key rotation schedules. (5) Never embed keys in client-side code. (6) Monitor key usage for anomalies. AI Cost Board provides key-level cost tracking that supports these governance practices.
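Several of these practices (approval, expiration dates, rotation schedules) can be checked automatically against a key inventory. The following is a minimal sketch, not a reference implementation: the `ApiKeyRecord` fields, the `policy_violations` helper, and the 90-day rotation window are all assumptions made for illustration and would need to match your own key store and policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional

# Hypothetical rotation schedule; pick a value that matches your policy.
MAX_KEY_AGE = timedelta(days=90)


@dataclass
class ApiKeyRecord:
    """Illustrative inventory entry for one API key (field names are assumed)."""
    key_id: str
    project: str
    team: str
    created_at: datetime
    expires_at: Optional[datetime]
    approved_by: Optional[str]


def policy_violations(key: ApiKeyRecord, now: datetime) -> List[str]:
    """Return the governance rules this key currently breaks."""
    problems = []
    if key.approved_by is None:
        problems.append("missing approval")
    if key.expires_at is None:
        problems.append("no expiration date")
    elif key.expires_at <= now:
        problems.append("expired")
    if now - key.created_at > MAX_KEY_AGE:
        problems.append("rotation overdue")
    return problems


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    stale = ApiKeyRecord("key-old", "chatbot", "support",
                         created_at=now - timedelta(days=120),
                         expires_at=None, approved_by=None)
    print(policy_violations(stale, now))
```

Running a check like this on a schedule gives a list of keys to rotate or revoke, attributed to a project and team.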

Rate limiting prevents both accidental overuse and abuse. Set per-key rate limits based on expected usage patterns, and per-user rate limits for internal tools. Add per-minute and per-day caps to prevent runaway costs. Configure gradual backoff rather than hard blocks, so that clients slow down instead of failing outright. Monitor rate limit hits to distinguish teams that need higher limits from those with runaway processes.
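To make the per-minute and per-day caps concrete, here is a small sketch of a per-key limiter. It uses a sliding-window log, which is one of several possible techniques, and it returns a suggested wait time instead of raising an error so callers can back off gradually. The `KeyLimiter` class and its parameters are hypothetical names for this example only.

```python
from collections import deque
from dataclasses import dataclass, field

SECONDS_PER_MINUTE = 60
SECONDS_PER_DAY = 86_400


@dataclass
class KeyLimiter:
    """Sliding-window limiter for one API key (illustrative sketch)."""
    per_minute: int
    per_day: int
    _events: deque = field(default_factory=deque)  # timestamps of allowed calls

    def check(self, now: float) -> float:
        """Return 0.0 and record the call if allowed, else seconds to wait."""
        # Forget calls that fell out of the 24-hour window.
        while self._events and now - self._events[0] >= SECONDS_PER_DAY:
            self._events.popleft()
        if len(self._events) >= self.per_day:
            return self._events[0] + SECONDS_PER_DAY - now
        recent = [t for t in self._events if now - t < SECONDS_PER_MINUTE]
        if len(recent) >= self.per_minute:
            return recent[0] + SECONDS_PER_MINUTE - now
        self._events.append(now)
        return 0.0


if __name__ == "__main__":
    limiter = KeyLimiter(per_minute=2, per_day=3)
    for t in (0.0, 1.0, 2.0, 60.0, 61.0):
        print(t, limiter.check(t))
```

A non-zero return value is also a useful signal to log: repeated hits on the same key are what the monitoring step above would look at.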

Financial governance for LLM usage starts with per-project monthly budget limits, with alerts at 50%, 80%, and 100% of the budget. Require approval for budget increases above defined thresholds. Implement per-team spending dashboards for visibility. Review and approve new model usage, especially for expensive models like GPT-4 or Claude Opus. Use AI Cost Board for real-time budget monitoring and alerting.
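The 50%, 80%, and 100% alert thresholds reduce to a simple comparison between the spend before and after each update. The sketch below shows that arithmetic only; the function name and its inputs are assumptions for illustration, and it does not represent how AI Cost Board computes alerts.

```python
from typing import List

# Alert levels from the text, expressed as fractions of the monthly budget.
ALERT_THRESHOLDS = (0.5, 0.8, 1.0)


def crossed_thresholds(previous_spend: float,
                       current_spend: float,
                       monthly_budget: float) -> List[float]:
    """Return the thresholds crossed between two spend readings.

    Comparing against the previous reading means each alert fires once,
    not on every update after the threshold has been passed.
    """
    if monthly_budget <= 0:
        raise ValueError("monthly_budget must be positive")
    return [
        level for level in ALERT_THRESHOLDS
        if previous_spend < level * monthly_budget <= current_spend
    ]


if __name__ == "__main__":
    # A project with a 1,000 budget jumps from 400 to 850 in one update:
    # both the 50% and 80% alerts should fire.
    print(crossed_thresholds(400.0, 850.0, 1000.0))
```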

Enterprise compliance requires logging all API key creation and usage, tracking which models process which data types, maintaining cost attribution records for financial audits, and providing evidence of governance controls for SOC 2 and ISO audits. AI Cost Board provides the cost governance layer with full audit trails, complementing security and compliance tools.
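One way to capture these requirements is an append-only log with one structured record per key event. The sketch below builds such a record as a JSON line; the field names (`action`, `key_id`, `actor`, `model`, `data_type`, `cost_usd`) are assumptions chosen to mirror the list above, not a schema required by any audit standard or tool.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_event(action: str,
                key_id: str,
                actor: str,
                model: Optional[str] = None,
                data_type: Optional[str] = None,
                cost_usd: Optional[float] = None,
                at: Optional[datetime] = None) -> str:
    """Serialize one audit record as a single JSON line."""
    at = at or datetime.now(timezone.utc)
    record = {
        "timestamp": at.isoformat(),
        "action": action,        # e.g. "key_created" or "usage"
        "key_id": key_id,        # an identifier, never the secret itself
        "actor": actor,
        "model": model,
        "data_type": data_type,  # which class of data the model processed
        "cost_usd": cost_usd,    # supports cost attribution in audits
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    print(audit_event("usage", "key-123", "svc-support",
                      model="example-model", data_type="support_tickets",
                      cost_usd=0.0123))
```

Logging the key identifier instead of the key value keeps the audit trail itself from becoming a source of leaked credentials.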


LLM governance is not about restricting AI usage; it is about enabling safe, cost-effective scaling. Start with API key management and budget controls, then add more sophisticated governance as your AI footprint grows.