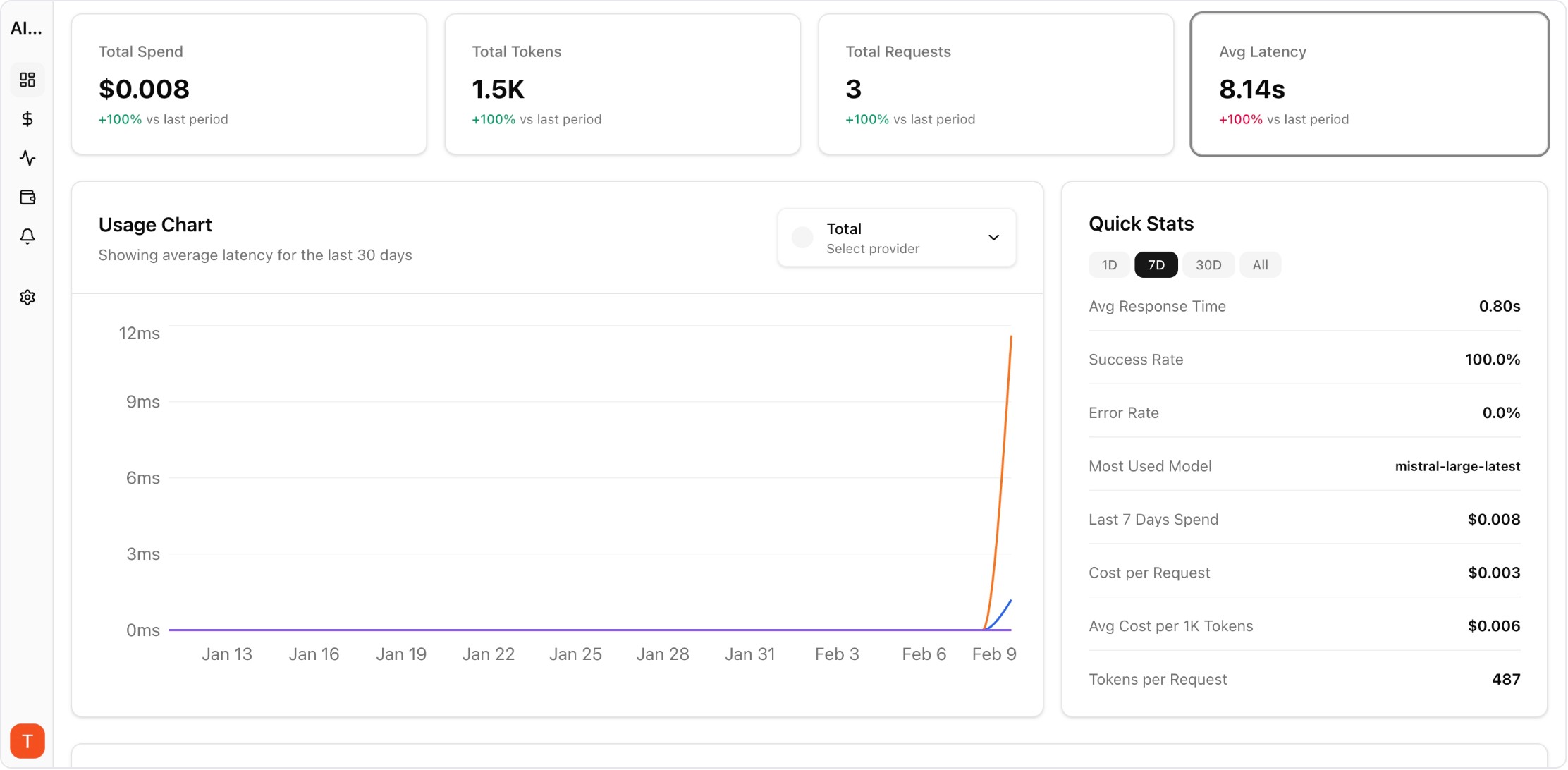

Proof from the product

Real UI snapshot used to anchor the operational workflow described in this article.

Enterprise AI adoption is outpacing the governance frameworks designed to manage it. FinOps principles, which transformed cloud cost management, offer a proven framework for AI cost governance. By applying FinOps disciplines of visibility, optimization, and operations to LLM spending, enterprises can scale AI adoption while keeping spend under financial control. This guide adapts FinOps best practices specifically for enterprise LLM operations.

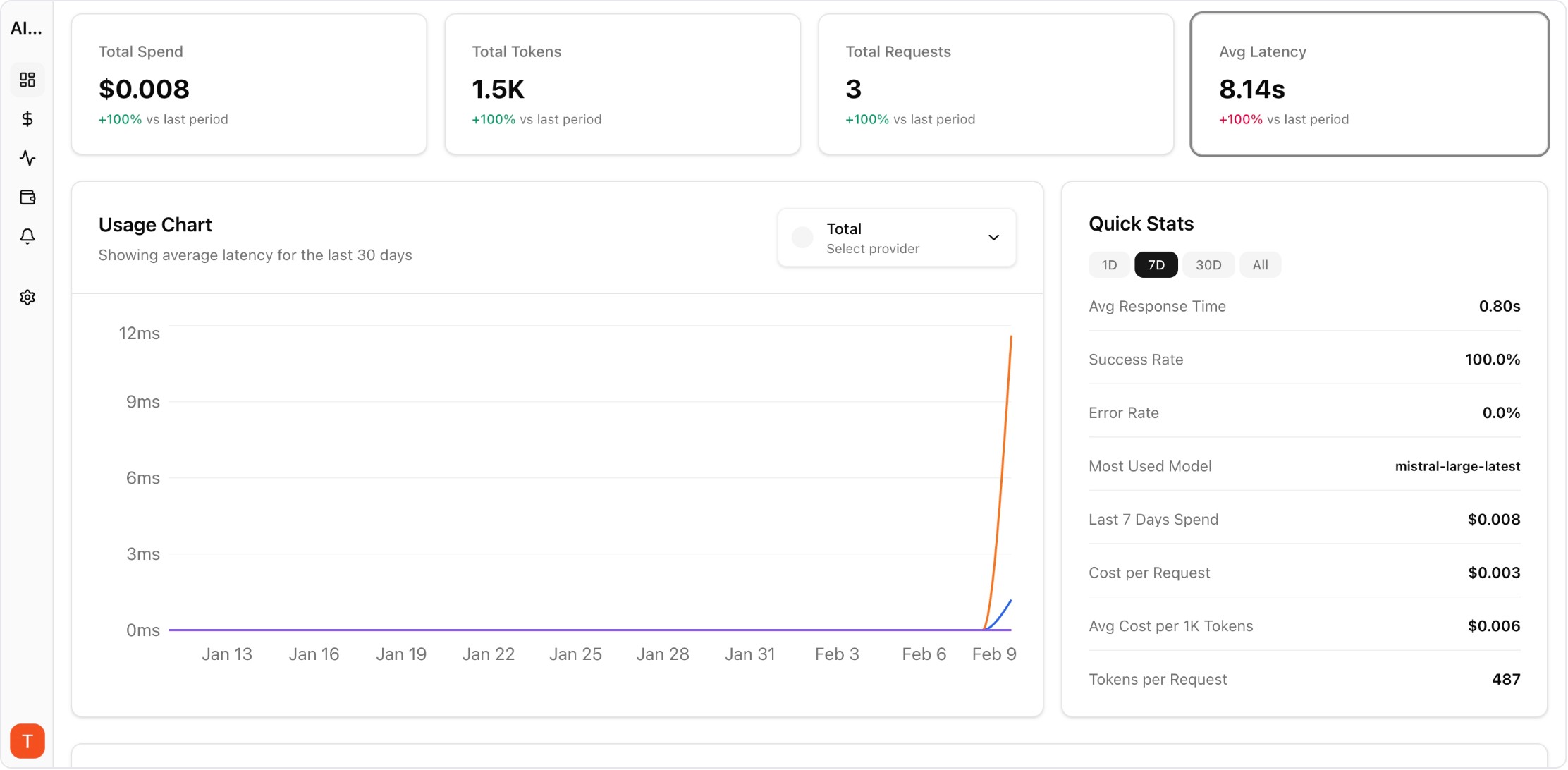

AI Cost FinOps applies financial operations principles to AI API spending. It rests on three pillars: Inform (create visibility into AI costs across teams and projects), Optimize (identify and act on cost reduction opportunities), and Operate (build governance processes that sustain efficiency). Unlike cloud FinOps, which focuses on infrastructure, AI FinOps addresses the challenges specific to usage-based (per-token) API pricing and non-deterministic workloads.

The foundation of AI FinOps is comprehensive cost visibility. Implement: (1) unified dashboards showing spend by provider, model, project, and team, (2) cost attribution that maps every API call to a business unit or cost center, (3) trend analysis showing spend trajectory, and (4) anomaly detection for unusual patterns. AI Cost Board provides this visibility layer out of the box.
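Cost attribution and anomaly detection can be prototyped before adopting a dedicated tool. The sketch below assumes each billed request is available as a record with a day, an API key, a model name, and a cost; the `ApiCall` shape, the `KEY_TO_COST_CENTER` mapping, and the leave-one-out z-score rule are illustrative assumptions, not how AI Cost Board or any provider API works.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class ApiCall:
    """One billed LLM request (hypothetical record shape)."""
    day: str        # ISO date, e.g. "2024-05-01"
    api_key: str
    model: str
    cost_usd: float


# Hypothetical mapping from API key to the cost center that owns it.
KEY_TO_COST_CENTER = {
    "key-search": "search-team",
    "key-support": "support-team",
}


def spend_by(calls, dimension):
    """Sum cost per value of 'cost_center', 'model', or 'day'."""
    totals = defaultdict(float)
    for call in calls:
        if dimension == "cost_center":
            # Unmapped keys are surfaced as "unattributed" instead of dropped.
            key = KEY_TO_COST_CENTER.get(call.api_key, "unattributed")
        else:
            key = getattr(call, dimension)
        totals[key] += call.cost_usd
    return dict(totals)


def flag_anomalies(daily_totals, z_threshold=3.0):
    """Flag days whose spend exceeds mean + z_threshold * stdev of all other days.

    Leaving the day under test out of its own baseline keeps a single spike
    from inflating the stdev and hiding itself. With a perfectly flat
    baseline (stdev 0), any increase over the baseline is flagged.
    """
    flagged = []
    for day, cost in daily_totals.items():
        others = [c for d, c in daily_totals.items() if d != day]
        if len(others) < 2:
            continue  # not enough history to judge this day
        if cost > mean(others) + z_threshold * pstdev(others):
            flagged.append(day)
    return sorted(flagged)
```

The "unattributed" bucket is deliberate: its size is a direct measure of how far attribution coverage still has to go.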

Chargeback models allocate AI costs to the business units that generate them. Start with showback (visibility only) before implementing full chargeback (actual cost allocation). Map API keys or project workspaces to cost centers. Report monthly costs per business unit with enough detail for teams to identify optimization opportunities. This sequencing builds accountability while keeping friction low.
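A monthly showback report only needs the key-to-cost-center mapping and a per-model breakdown. In this minimal sketch the `(month, api_key, model, cost_usd)` record format and the `billable` flag are assumptions for illustration; the only difference between the two modes is whether the figures are marked for internal billing.

```python
from collections import defaultdict


def monthly_report(records, key_to_cost_center, mode="showback"):
    """Summarise spend per (month, cost center), broken down by model.

    records: iterable of (month, api_key, model, cost_usd) tuples.
    mode: "showback" reports costs only; "chargeback" also marks them billable.
    """
    if mode not in ("showback", "chargeback"):
        raise ValueError(f"unknown mode: {mode!r}")
    grouped = defaultdict(lambda: defaultdict(float))
    for month, api_key, model, cost in records:
        center = key_to_cost_center.get(api_key, "unattributed")
        grouped[(month, center)][model] += cost
    rows = []
    for (month, center), by_model in sorted(grouped.items()):
        rows.append({
            "month": month,
            "cost_center": center,
            "total_usd": round(sum(by_model.values()), 2),
            # Per-model detail lets each team spot its own optimization targets.
            "by_model": {m: round(c, 2) for m, c in sorted(by_model.items())},
            "billable": mode == "chargeback",
        })
    return rows
```

Switching from showback to chargeback then becomes a change of one argument rather than a new reporting pipeline.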

AI cost forecasting requires different approaches from cloud infrastructure forecasting because LLM usage is more variable. Use: (1) trailing 30-day averages with growth factors for baseline forecasts, (2) feature roadmap inputs to predict step changes, (3) scenarios for model pricing changes, and (4) confidence ranges rather than point estimates. Review forecast accuracy monthly and adjust the forecasting method accordingly.
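The four inputs above can be combined into a single range forecast. This is a sketch under simplifying assumptions: growth, roadmap step, and price change are supplied by hand as scenario parameters, and the range treats daily costs as independent so the period's standard deviation scales with the square root of the number of days. Real LLM usage is often autocorrelated, so the band should be checked against actuals during the monthly accuracy review.

```python
from statistics import mean, pstdev


def forecast_next_period(daily_costs, growth=0.0, roadmap_step_usd=0.0,
                         price_change=0.0, days=30, band=1.5):
    """Return (low, expected, high) spend for the next `days`-day period.

    daily_costs: recent daily spend, oldest first; the last 30 values are used.
    growth: assumed organic growth over the period, e.g. 0.10 for +10%.
    roadmap_step_usd: assumed extra spend from planned feature launches.
    price_change: assumed provider price change, e.g. -0.20 for a 20% cut.
    band: range half-width in standard deviations.
    """
    window = daily_costs[-30:]
    if len(window) < 30:
        raise ValueError("need at least 30 days of history")
    # (1) Trailing 30-day average, scaled to the period and by a growth factor.
    baseline = mean(window) * days * (1 + growth)
    # (2) Roadmap step change, then (3) the pricing scenario applied to the total.
    expected = (baseline + roadmap_step_usd) * (1 + price_change)
    # (4) Confidence range, assuming independent daily costs (sqrt-of-days scaling).
    spread = band * pstdev(window) * days ** 0.5 * (1 + growth) * (1 + price_change)
    return max(0.0, expected - spread), expected, expected + spread
```

For example, a flat $100/day history with +10% growth, a $500 launch, and a 20% price cut gives an expected (3,000 × 1.1 + 500) × 0.8 = $3,040 for the next 30 days.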

Effective governance enables fast AI adoption while preventing cost surprises. Implement tiered controls: (1) self-service access to approved models within budget limits, (2) lightweight approval for new model adoption or budget increases, and (3) quarterly reviews of AI spend versus business value. Avoid heavy governance that slows experimentation: the goal is informed usage, not restricted usage.
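Tiers 1 and 2 can be enforced as a simple pre-request check, while tier 3 remains a human review. In this minimal sketch the `APPROVED_MODELS` allowlist, the `UsageRequest` fields, and the decision strings are hypothetical; the point is that the default path is "allow", and only the two named exceptions route to approval.

```python
from dataclasses import dataclass

# Hypothetical allowlist of models approved for self-service use.
APPROVED_MODELS = {"model-small", "model-large"}


@dataclass
class UsageRequest:
    team: str
    model: str
    estimated_cost_usd: float


def route_request(req, spent_this_month_usd, monthly_budget_usd):
    """Return (decision, reason) for tiers 1 and 2 of the control model.

    Tier 1: approved model within budget -> allowed without review.
    Tier 2: new model or budget overrun -> lightweight approval.
    Tier 3 (quarterly spend-versus-value review) is a meeting, not a runtime check.
    """
    if req.model not in APPROVED_MODELS:
        return "needs_approval", "new model adoption"
    if spent_this_month_usd + req.estimated_cost_usd > monthly_budget_usd:
        return "needs_approval", "budget increase"
    return "allow", "self-service within budget"
```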

Assess maturity across five dimensions: Cost Visibility (can you see all AI spend in one place?), Attribution (is every dollar mapped to an owner?), Optimization (do teams actively manage their AI costs?), Forecasting (can you predict next quarter's AI spend?), and Governance (are there processes for budget management?). Most enterprises start at levels 1-2 and should target levels 3-4 within six months.
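A self-assessment against these five dimensions can be tracked as a small scorecard. This sketch assumes a 1-5 level per dimension and a target of level 3; both the scale and the choice to report the average alongside the weakest dimension are illustrative assumptions rather than a standard maturity model.

```python
DIMENSIONS = ("cost_visibility", "attribution", "optimization",
              "forecasting", "governance")


def assess_maturity(scores, target=3):
    """Summarise a self-assessment scored 1-5 per dimension (assumed scale).

    Returns the average level, the weakest dimension, and how many levels
    each below-target dimension still needs to climb.
    """
    for dim in DIMENSIONS:
        if dim not in scores:
            raise ValueError(f"missing score for {dim}")
        if not 1 <= scores[dim] <= 5:
            raise ValueError(f"{dim} must be scored 1-5")
    return {
        "average": sum(scores[d] for d in DIMENSIONS) / len(DIMENSIONS),
        # On ties, min() returns the first dimension in DIMENSIONS order.
        "weakest": min(DIMENSIONS, key=lambda d: scores[d]),
        "gaps": {d: target - scores[d] for d in DIMENSIONS if scores[d] < target},
    }
```

Reporting the weakest dimension next to the average keeps one strong area (often visibility) from masking a gap that blocks the others, such as missing attribution.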

AI FinOps is not about restricting AI usage; it is about enabling informed, accountable AI adoption at enterprise scale. Start with visibility, build toward optimization, and let governance emerge from data-driven practices.