What to measure

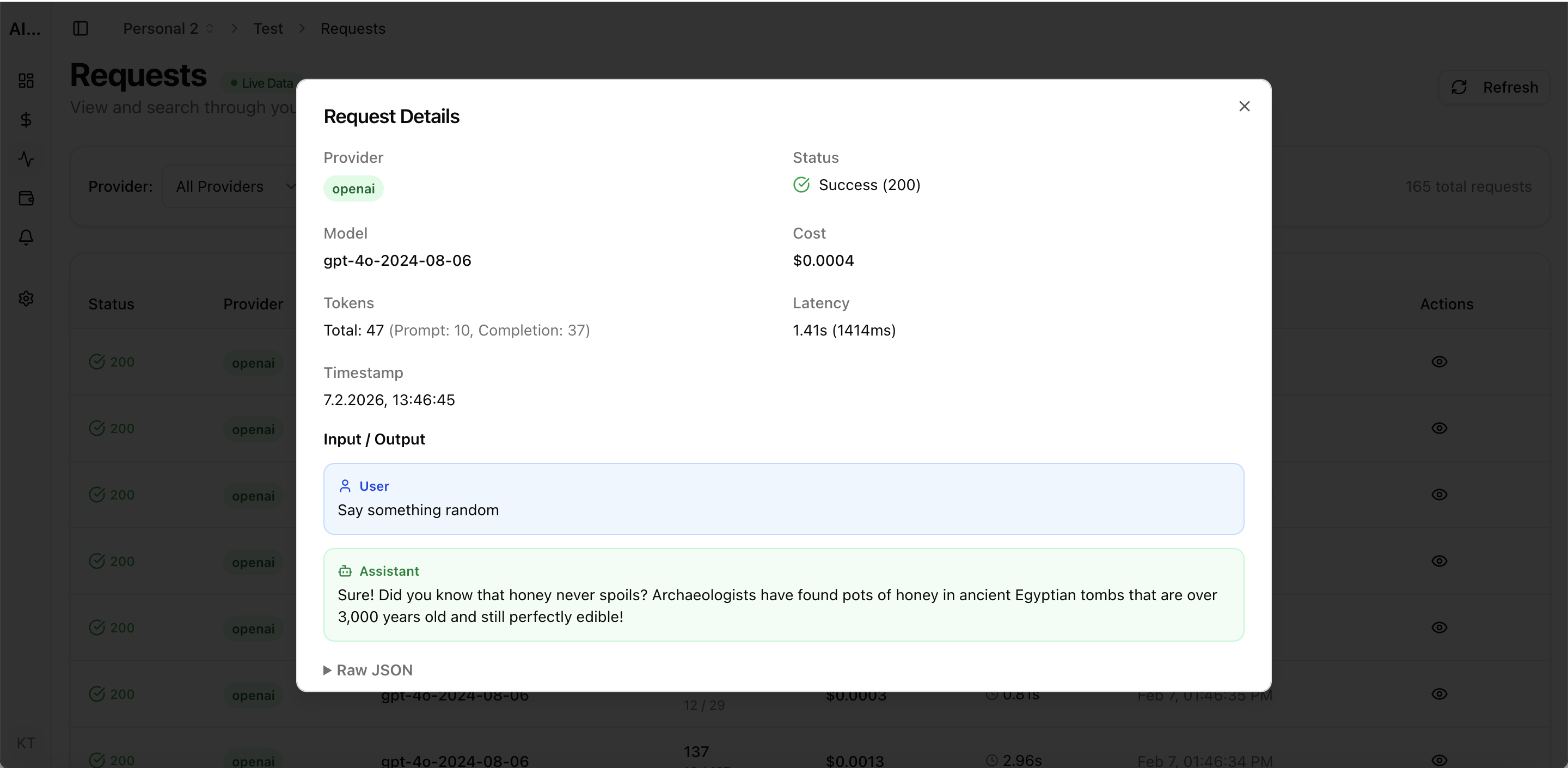

| Metric | Why it matters |
|---|---|
| Cost per active user | Check whether copilot feature costs fit your monetization model. |
| Cost per action | Find expensive prompts and workflows that hurt margins. |
| p95 latency | Keep tail response times low to maintain user trust and adoption. |
| Error rate by feature | Catch regressions after prompt or model changes. |
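The table does not say how each metric is computed. The following is a minimal sketch. It assumes a per-request log in which every model call records a user, a feature, an action ID, a cost, a latency and an error flag. The `RequestLog` schema and the `summarize` and `p95` helpers are hypothetical and are not taken from any particular tool.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class RequestLog:
    """One model call (hypothetical schema)."""
    user_id: str
    feature: str      # copilot feature that issued the call
    action_id: str    # one user action may span several model calls
    cost_usd: float   # inference cost of this call
    latency_ms: float
    error: bool


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile over the given values."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def summarize(logs: list[RequestLog]) -> dict:
    """Compute the four metrics over one reporting window of request logs."""
    if not logs:
        raise ValueError("need at least one request to compute metrics")

    total_cost = sum(r.cost_usd for r in logs)
    # Assumption: "active" means the user made at least one request in this window.
    active_users = {r.user_id for r in logs}
    # Group calls by action so multi-call workflows count as one action.
    actions = {r.action_id for r in logs}

    # feature -> (request count, error count)
    counts: dict[str, tuple[int, int]] = {}
    for r in logs:
        n, errs = counts.get(r.feature, (0, 0))
        counts[r.feature] = (n + 1, errs + int(r.error))

    return {
        "cost_per_active_user": total_cost / len(active_users),
        "cost_per_action": total_cost / len(actions),
        "p95_latency_ms": p95([r.latency_ms for r in logs]),
        "error_rate_by_feature": {f: e / n for f, (n, e) in counts.items()},
    }
```

In this sketch, an "action" is one user-initiated workflow that may span several model calls, so cost per action divides total cost by distinct `action_id` values. Latency and error rate are measured per request, and p95 uses the nearest-rank method. An interpolated percentile, or a denominator that counts all active users rather than only copilot users, would give somewhat different numbers.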