Problem

Teams running multiple providers struggle with inconsistent metrics, fragmented logs, and slow incident response when each provider is monitored separately.

Monitor OpenAI, Anthropic, Gemini, and other providers in one observability and cost-control workflow.

| Area | What good looks like |
|---|---|
| Problem signal | Teams running multiple providers struggle with inconsistent metrics, fragmented logs, and slow incident response when each provider is monitored separately. |
| What to measure | Requests, tokens, cost, latency, errors, and provider/model breakdowns |
| Operational proof | Request logs + dashboards + alert history + project-level attribution |
| Decision loop | Weekly review with engineering and finance owners |
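As a rough illustration of the "What to measure" row, the sketch below groups normalized request logs by provider and model and computes requests, tokens, cost, average latency, and error rate. It is a minimal example under stated assumptions, not AI Cost Board's implementation: the `RequestRecord` schema, the `summarize` helper, the model names, and all sample values are hypothetical, and each record is assumed to already carry a computed `cost_usd`.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestRecord:
    """One normalized request log entry (hypothetical schema)."""
    provider: str
    model: str
    input_tokens: int
    output_tokens: int
    cost_usd: float      # assumed to be computed upstream per request
    latency_ms: float
    is_error: bool


def summarize(records):
    """Group records by (provider, model) and compute per-group metrics."""
    groups = defaultdict(list)
    for r in records:
        groups[(r.provider, r.model)].append(r)

    summary = {}
    for key, rows in groups.items():
        n = len(rows)
        summary[key] = {
            "requests": n,
            "tokens": sum(r.input_tokens + r.output_tokens for r in rows),
            "cost_usd": round(sum(r.cost_usd for r in rows), 6),
            "avg_latency_ms": sum(r.latency_ms for r in rows) / n,
            "error_rate": sum(r.is_error for r in rows) / n,
        }
    return summary


# Illustrative values only; not real traffic, model names, or prices.
SAMPLE = [
    RequestRecord("openai", "model-a", 100, 50, 0.002, 400.0, False),
    RequestRecord("openai", "model-a", 200, 100, 0.004, 600.0, True),
    RequestRecord("anthropic", "model-b", 300, 150, 0.006, 500.0, False),
]

if __name__ == "__main__":
    for (provider, model), stats in summarize(SAMPLE).items():
        print(provider, model, stats)
```

Keying the summary on the `(provider, model)` pair keeps the provider/model breakdown explicit, so the same structure could feed a dashboard or a weekly review export.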

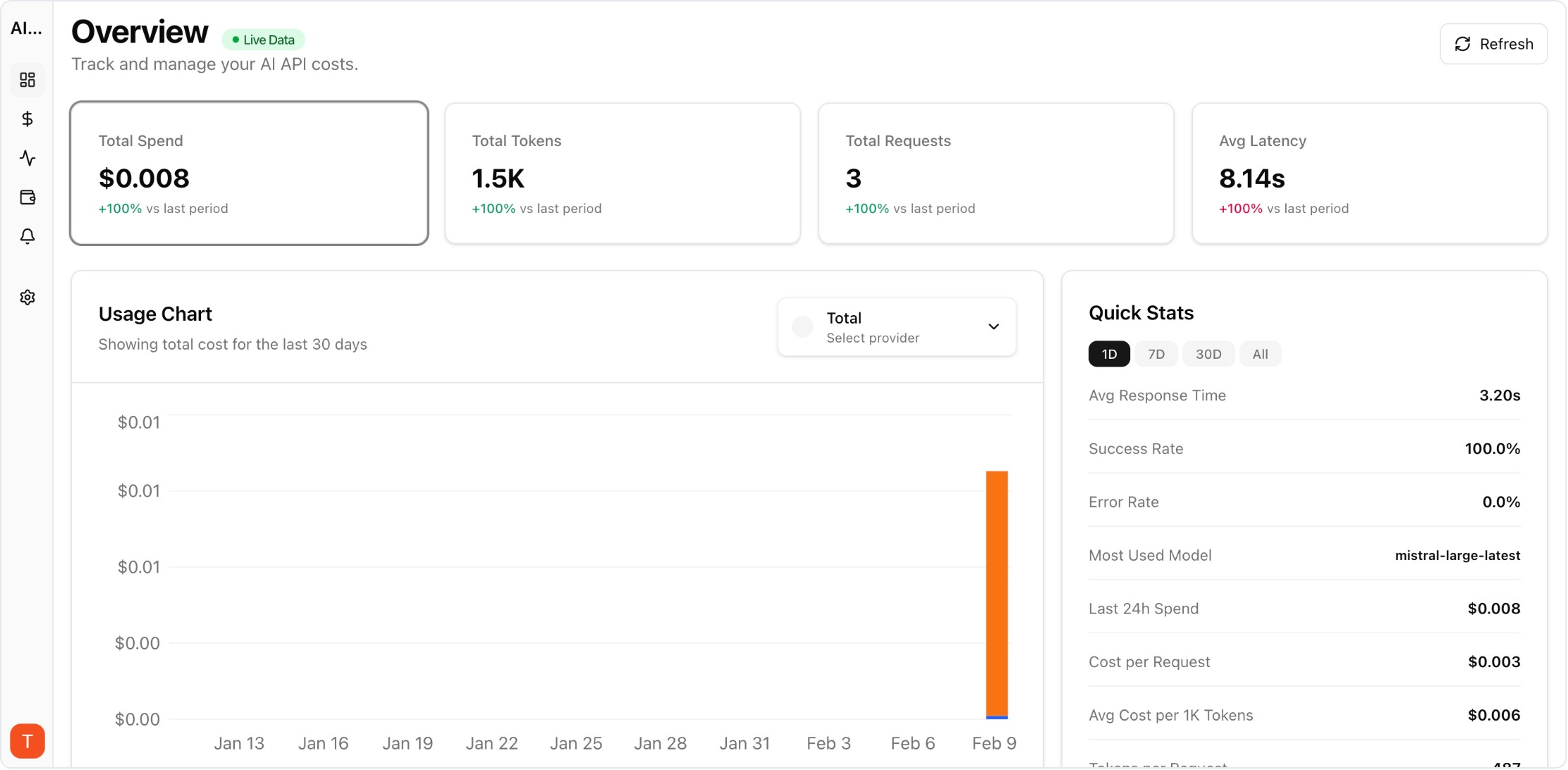

Real UI snapshot from AI Cost Board used in production workflows.

Provider-level drilldowns make cross-provider routing and cost decisions faster.

Monitor cost, tokens, usage, latency, errors, and request logs across providers in one platform.

This page is for engineering, platform, finance, and product teams evaluating AI API observability and cost-control workflows.

This is not cost-only monitoring: it covers cost together with usage, latency, errors, and request-level evidence so teams can make safer production decisions.

AI Cost Board combines dashboards, request logs, provider analytics, and budget controls for this use case.